Multiple AI models working together in real time, combining speed, cost efficiency, and capability within a single coordinated workflow. Image Source: DALL·E via ChatGPT (OpenAI)

OpenAI Launches GPT-5.4 Mini and Nano for Faster, Lower-Cost AI Workflows

OpenAI has introduced GPT-5.4 mini and GPT-5.4 nano, two smaller models designed to bring more of the GPT-5.4 family’s capabilities into faster, lower-cost workflows for coding, subagents, and real-time applications.

This matters because AI development is increasingly shaped by speed, cost, and responsiveness—not just raw model intelligence—especially in systems where latency directly impacts the user experience.

OpenAI presents these models as part of a more unified workflow, where larger models handle planning and judgment while smaller models execute supporting tasks quickly and efficiently, reducing the need to switch between separate systems.

This change primarily affects developers, product teams, and enterprises building AI-powered applications, particularly those managing real-time interactions, automation pipelines, and high-frequency workloads.

In short: OpenAI is expanding the GPT-5.4 lineup with smaller models designed to keep AI workflows fast, scalable, and cost-efficient without sacrificing too much capability.

GPT-5.4 mini and GPT-5.4 nano are smaller, faster, and more cost-efficient versions of GPT-5.4 designed to support coding, subagents, and real-time AI applications within a unified workflow.

Key Takeaways: OpenAI GPT-5.4 Mini and Nano Capabilities and Use Cases

GPT-5.4 mini and GPT-5.4 nano are smaller, faster, and lower-cost AI models designed to support coding, subagents, and real-time AI workflows within the GPT-5.4 system.

OpenAI GPT-5.4 mini improves on GPT-5 mini across coding, reasoning, multimodal understanding, and tool use, while running more than 2× faster and approaching GPT-5.4-level performance on key benchmarks.

OpenAI GPT-5.4 nano is the smallest and lowest-cost model in the GPT-5.4 family, optimized for classification, data extraction, ranking, and lightweight coding subagent tasks.

GPT-5.4 mini and nano are designed for subagents and multi-model workflows, enabling larger models to handle planning while smaller models execute tasks quickly and in parallel.

The models are optimized for latency-sensitive AI applications, including coding assistants, real-time AI agents, and computer-use systems that interpret screenshots and interfaces.

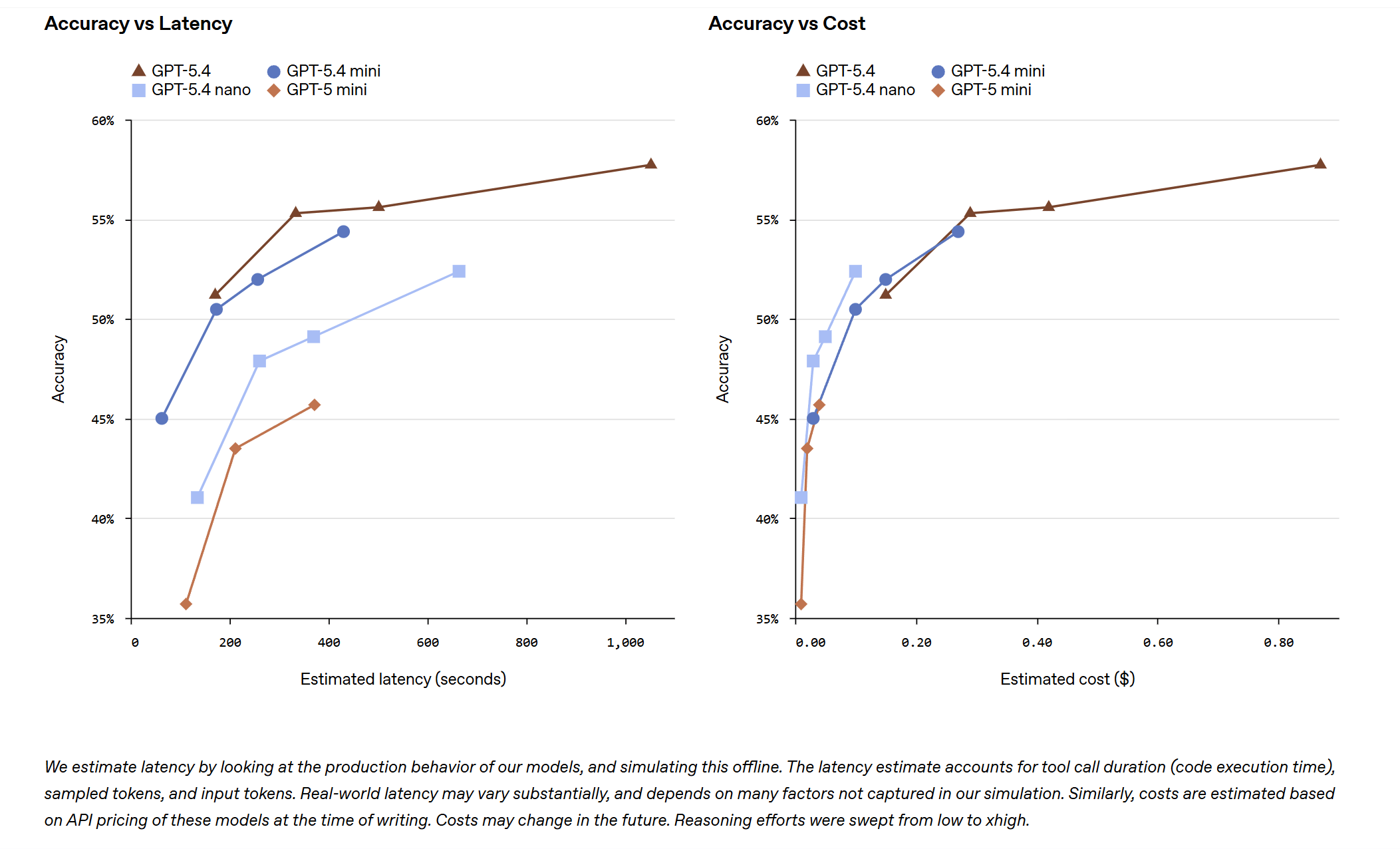

Benchmark data shows GPT-5.4 mini outperforming GPT-5 mini at similar latencies, supporting its role as a production-ready model for fast, cost-efficient AI workflows.

The release reflects a broader move toward multi-model AI systems, where smaller models handle execution and larger models handle reasoning, changing how developers design scalable AI workflows.

OpenAI GPT-5.4 Mini and Nano: Faster, Lower-Cost Models for Latency-Sensitive AI Workflows

OpenAI’s latest release highlights a practical reality in AI development: the most effective model is not always the largest one, especially in environments where latency (how quickly a system responds) directly affects the user experience.

GPT-5.4 mini and nano are designed for high-volume, latency-sensitive workloads, bringing many of the capabilities of GPT-5.4 into faster, more efficient models that can operate at lower cost and higher scale.

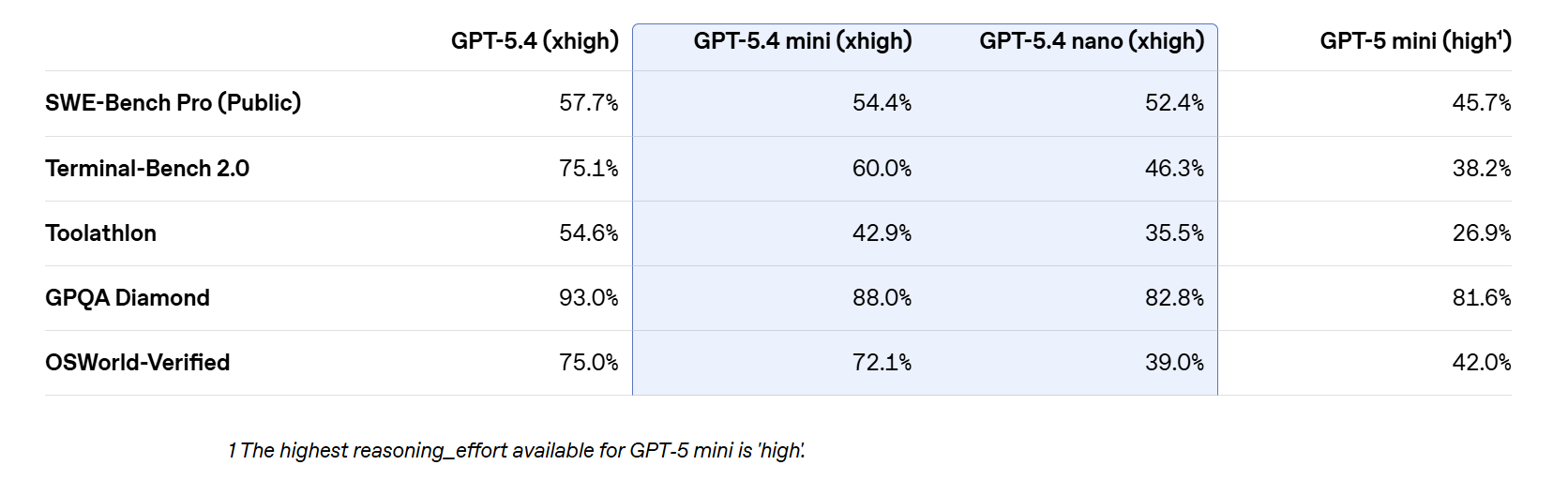

GPT-5.4 mini delivers clear gains over GPT-5 mini, with improved performance in coding, reasoning, multimodal understanding, and tool use, while operating at more than twice the speed. It also approaches the performance of the larger GPT-5.4 on evaluations such as SWE-Bench Pro and OSWorld-Verified, reinforcing its role as a practical model for everyday development and agent workflows.

GPT-5.4 nano, by contrast, is the smallest and lowest-cost model in the GPT-5.4 family, designed for high-volume tasks where speed and cost efficiency are the primary constraints. It is intended for classification, data extraction, ranking, and lightweight coding subagent tasks that support larger workflows.

These models are especially relevant for real-time AI applications, including coding assistants that must feel responsive, subagents completing targeted tasks in parallel, and systems that interpret screenshots or complex interface elements in real time. In these environments, speed and reliability often matter more than maximizing reasoning depth at every step.

Rather than adding two more model options, OpenAI is giving developers a practical way to stay within the same model family while assigning different types of work to models based on their speed and capability—reducing the need to switch between separate systems or overuse a larger model for every task.
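The idea of assigning work to models by speed and capability can be pictured as a small routing table. The following is a minimal sketch, not OpenAI's method: the task category names are hypothetical, and the model strings are illustrative labels rather than confirmed API identifiers.

```python
from enum import Enum


class Tier(str, Enum):
    # Illustrative labels only; not confirmed API model identifiers.
    FULL = "gpt-5.4"
    MINI = "gpt-5.4-mini"
    NANO = "gpt-5.4-nano"


# Hypothetical task categories, grouped by the roles the article describes.
TASK_TIERS = {
    "planning": Tier.FULL,
    "final_judgment": Tier.FULL,
    "targeted_edit": Tier.MINI,
    "codebase_search": Tier.MINI,
    "screenshot_interpretation": Tier.MINI,
    "classification": Tier.NANO,
    "data_extraction": Tier.NANO,
    "ranking": Tier.NANO,
}


def pick_tier(task_type: str) -> Tier:
    """Return the tier for a task type, falling back to the largest model when unsure."""
    return TASK_TIERS.get(task_type, Tier.FULL)
```

Falling back to the largest tier for unknown task types is one possible design choice: it trades cost for safety when a task has not been categorized yet.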

GPT-5.4 Mini for Coding Workflows: Faster, Low-Latency AI Development

GPT-5.4 mini and nano are designed to support coding workflows where fast iteration and responsiveness are critical. The models can handle targeted edits, navigate large codebases, generate front-end components, and support debugging loops with low latency—making them well suited for development environments where delays can slow progress.

OpenAI highlights GPT-5.4 mini in particular as a strong option for these workflows, delivering improved performance over GPT-5 mini while maintaining faster response times. This combination allows developers to move through coding tasks more quickly and keep workflows responsive without relying on larger, more resource-intensive models for every step.

Benchmark results shared by OpenAI show GPT-5.4 mini outperforming GPT-5 mini at similar latencies while approaching GPT-5.4-level performance, providing measurable validation of its role as a production-ready model for fast, cost-efficient coding workflows.

That performance shows up in real-world usage. As Brittany O’Shea, Senior Director of Product at GitHub, explained:

“GPT-5.4 mini raises the bar for everyday developer speed. In our evaluations, it leads on time to first token among OpenAI’s top mini models, navigates codebases with ease, and performs exceptionally well with grep-style workflows.”

In practice, this means that for many development tasks, speed and responsiveness matter as much as raw capability. Models like GPT-5.4 mini allow teams to complete more work within tighter feedback loops, improving productivity while keeping costs under control.

GPT-5.4 Mini Subagents: Faster Task Execution in Multi-Model AI Systems

GPT-5.4 mini is designed to support subagent workflows, where AI systems combine models of different sizes to handle tasks at different levels of complexity.

In these systems, a larger model such as GPT-5.4 handles planning, coordination, and final judgment, while smaller models like GPT-5.4 mini execute supporting tasks in parallel. These tasks can include searching a codebase, reviewing files, or processing documents—work that benefits from speed and efficiency rather than deep reasoning.

This approach reflects a growing pattern in AI system design: instead of relying on a single model for everything, developers can build coordinated systems where decision-making and execution are separated. Larger models determine what needs to be done, while smaller models carry out the work quickly at scale.

As smaller models become faster and more capable, this architecture becomes more practical. It allows teams to reduce reliance on larger models for routine tasks while improving responsiveness and controlling costs across multi-step workflows.
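The planner-and-executor split described above might look roughly like the following sketch. Both `plan` and `run_subagent` are hypothetical stand-ins: a real system would call a larger model in the first and a smaller model in the second, and nothing here reflects an actual OpenAI or Codex interface.

```python
import asyncio


def plan(goal: str) -> list[str]:
    """Stand-in for the larger model's planning step; returns fixed subtasks."""
    return [
        f"search codebase for {goal}",
        f"review files related to {goal}",
        f"process documents about {goal}",
    ]


async def run_subagent(task: str) -> str:
    """Stand-in for a call to a small, fast model."""
    await asyncio.sleep(0.01)  # simulate network and inference latency
    return f"done: {task}"


async def orchestrate(goal: str) -> list[str]:
    subtasks = plan(goal)
    # Fan out: subagents run concurrently; results come back in subtask order.
    return await asyncio.gather(*(run_subagent(t) for t in subtasks))


results = asyncio.run(orchestrate("auth bug"))
```

Because the subtasks run concurrently, total wall-clock time in this sketch is close to the slowest single subtask rather than the sum of all three, which is the responsiveness benefit the pattern is after.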

Abhisek Modi, AI Engineering Lead at Notion, described how this plays out in real-world systems:

“GPT-5.4 mini handles focused, well-defined tasks with impressive precision. For editing pages, it matched and often exceeded GPT-5.2 on handling complex formatting at a fraction of the compute. Until recently, only the most expensive models could reliably navigate agentic tool calling. Today, smaller models like GPT-5.4 mini and nano can easily handle it, which will let our users building Custom Agents on Notion pick exactly the amount of intelligence they need.”

Performance data supports that shift. As Bertie Vidgen, AI Research at Mercor, noted:

“GPT-5.4 mini delivers strong performance on agentic workloads. In our APEX-Agents evaluations, the model outperformed Gemini 3 Flash and Sonnet 4.6 on agentic tasks, showing meaningful gains when higher reasoning effort and token budgets are applied.”

The takeaway is clear: smaller models are no longer just fallback options—they are becoming core components of agent-based systems, handling meaningful work inside larger AI workflows.

GPT-5.4 Mini for Computer Use: Faster Multimodal AI for Real-Time Interface Interaction

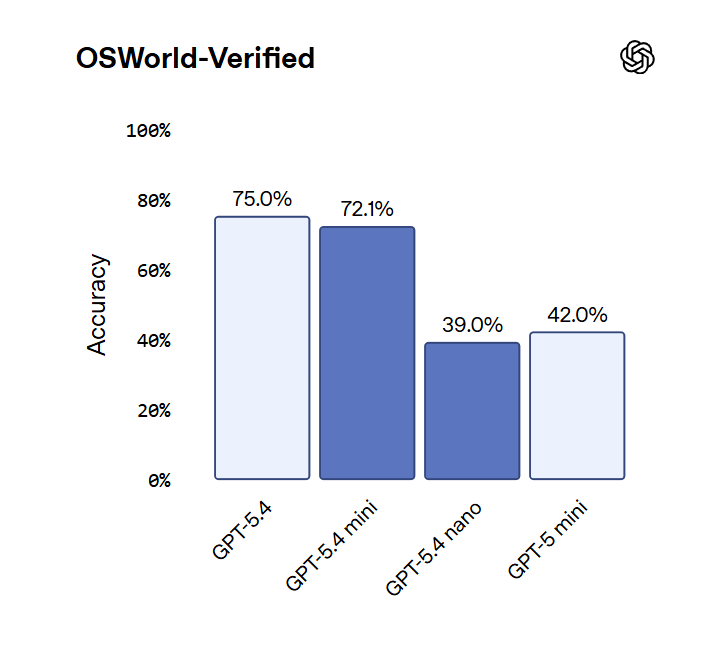

GPT-5.4 mini also delivers strong performance on multimodal tasks, particularly in computer-use scenarios where the model must interpret screenshots of complex user interfaces and take action in real time.

This capability extends the model beyond coding into real-world application workflows, where AI systems interact directly with software environments. In these settings, visual understanding, tool use, and response speed must work together to complete tasks reliably.

Benchmark results show GPT-5.4 mini performing strongly on OSWorld-Verified, approaching GPT-5.4 while outperforming GPT-5 mini, supporting its ability to handle computer-use tasks with higher accuracy and speed than earlier mini models.

GPT-5.4 Mini and Nano Availability: API, Codex, ChatGPT, and Pricing

GPT-5.4 mini is available across the API, Codex, and ChatGPT, while GPT-5.4 nano is currently limited to API access.

In the API, GPT-5.4 mini supports text and image inputs, tool use, function calling, web search, file search, computer use, and skills. It includes a 400,000-token context window and is priced at $0.75 per 1 million input tokens and $4.50 per 1 million output tokens.

GPT-5.4 nano is the lowest-cost option in the GPT-5.4 family, priced at $0.20 per 1 million input tokens and $1.25 per 1 million output tokens, making it suitable for high-volume, cost-sensitive workloads.
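To make the pricing concrete, here is a small cost estimator using the per-million-token prices quoted above. The function name and the example token counts are illustrative assumptions; actual bills may differ depending on pricing details not covered here.

```python
from decimal import Decimal

# USD per 1 million tokens, as quoted in the article.
PRICES = {
    "mini": {"input": Decimal("0.75"), "output": Decimal("4.50")},
    "nano": {"input": Decimal("0.20"), "output": Decimal("1.25")},
}
MILLION = Decimal(1_000_000)


def estimate_cost(model: str, input_tokens: int, output_tokens: int) -> Decimal:
    """Estimate cost in USD for a workload on the given model."""
    price = PRICES[model]
    total = price["input"] * input_tokens + price["output"] * output_tokens
    return total / MILLION


# Example workload: 2 million input tokens and 500,000 output tokens.
mini_cost = estimate_cost("mini", 2_000_000, 500_000)  # 1.50 + 2.25 = 3.75
nano_cost = estimate_cost("nano", 2_000_000, 500_000)  # 0.40 + 0.625 = 1.025
```

For this hypothetical workload, nano comes in at roughly a quarter of mini's cost, which illustrates why it targets high-volume tasks.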

In Codex, GPT-5.4 mini is available across the app, CLI, IDE extension, and web, allowing developers to use the model within their existing workflows. It uses only 30% of the GPT-5.4 quota, enabling lower-cost handling of simpler coding tasks while reserving more complex reasoning for larger models. Codex can also delegate subagent tasks to GPT-5.4 mini, reinforcing its role in multi-model workflows.
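As a rough illustration of the 30% figure, the sketch below assumes quota is counted per task and that one GPT-5.4 mini task consumes 0.3 of what a GPT-5.4 task would. That per-task accounting is an assumption made here for the arithmetic, not a documented description of how Codex meters usage.

```python
from fractions import Fraction

# From the article: GPT-5.4 mini uses 30% of the GPT-5.4 quota.
MINI_QUOTA_RATIO = Fraction(30, 100)


def quota_used(full_tasks: int, mini_tasks: int) -> Fraction:
    """Quota consumed, in GPT-5.4 task equivalents (assumed per-task accounting)."""
    return full_tasks + mini_tasks * MINI_QUOTA_RATIO


# Two GPT-5.4 tasks plus ten mini tasks: 2 + 10 * 0.3 = 5 task equivalents.
mixed = quota_used(full_tasks=2, mini_tasks=10)
```

Under that assumption, delegating ten simple tasks to mini costs the same quota as three GPT-5.4 tasks.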

In ChatGPT, GPT-5.4 mini is available to Free and Go users through the “Thinking” feature, and serves as a rate-limit fallback for GPT-5.4 in higher-tier usage—ensuring continued access when demand exceeds limits.

Q&A: GPT-5.4 Mini and Nano for AI Workflows and Developers

Q: What did OpenAI announce?

A: OpenAI launched GPT-5.4 mini and GPT-5.4 nano, two smaller AI models designed for faster, lower-cost coding, subagent, and real-time multimodal workflows.

Q: How do GPT-5.4 mini and nano work together?

A: GPT-5.4 mini and nano are designed to operate within multi-model systems, where larger models handle planning and reasoning, while smaller models execute tasks quickly in parallel—reducing latency and cost across workflows.

Q: What is the main idea behind this release?

A: The goal is to keep AI work within a single model family, allowing developers to combine models of different sizes for planning and execution instead of switching between separate systems.

Q: Why does GPT-5.4 mini matter more than a typical “mini” model?

A: GPT-5.4 mini delivers strong performance across coding, tool use, and agentic workflows while running faster and at lower cost, making it a practical production model rather than just a lightweight alternative.

Q: What is GPT-5.4 nano designed for?

A: GPT-5.4 nano is optimized for high-volume, lower-complexity tasks such as classification, data extraction, ranking, and lightweight coding support within larger workflows.

What This Means: GPT-5.4 Mini and Nano Enable Faster, Multi-Model AI Workflows

This release points to a more practical way of building AI products, where different models handle different parts of the same workflow instead of forcing one model to do everything.

The key point: OpenAI is redefining smaller models as fast execution layers that handle real work—like coding, tool use, and interface interaction—rather than treating them as limited backups.

Who Should Care: If you are a developer, product builder, or enterprise team, this matters because building useful AI products now depends not just on model intelligence, but on how well your system can plan, take action, respond quickly, and manage cost across multiple steps.

Why This Matters: AI is now showing up inside real products, where speed, consistency, and cost, not just how advanced the model is, directly affect whether people actually use it.

What Decision This Affects: Teams must decide how to design their workflows: whether to keep using smaller models as limited fallback options, or to start treating them as core tools for fast, repeatable tasks like coding, subagents, and computer use.

In short: GPT-5.4 mini and nano show that useful AI systems are built by combining fast, efficient models with more powerful ones, not by relying on a single model to do everything.

The teams that win in AI won’t be the ones with the smartest models—they’ll be the ones whose systems actually work fast enough for people to use every day.

Sources:

OpenAI - Introducing GPT-5.4 Mini and Nano

https://openai.com/index/introducing-gpt-5-4-mini-and-nano/

OpenAI Developers - Codex Subagents

https://developers.openai.com/codex/subagents

OpenAI Deployment Safety - GPT-5.4 Thinking Research Category Update: Sandbagging

https://deploymentsafety.openai.com/gpt-5-4-thinking/research-category-update-sandbagging

Editor’s Note: This article was created by Alicia Shapiro, CMO of AiNews.com, with writing, image, and idea-generation support from ChatGPT, an AI assistant. However, the final perspective and editorial choices are solely Alicia Shapiro’s. Special thanks to ChatGPT for assistance with research and editorial support in crafting this article.