An AI agent interacts with spreadsheets, software tools, and code across multiple screens—illustrating the workplace automation capabilities OpenAI describes in GPT-5.4. Image Source: ChatGPT - 5.2

OpenAI Launches GPT-5.4 for Professional Work, AI Agents, and Coding Automation

OpenAI has launched GPT-5.4, a new frontier AI model designed for professional knowledge work, coding, and AI agent workflows. The model is rolling out across ChatGPT, the OpenAI API, and Codex, alongside a higher-performance GPT-5.4 Pro version for more demanding tasks. In ChatGPT, the model appears as GPT-5.4 Thinking, replacing GPT-5.2 Thinking for paid users.

The release matters because GPT-5.4 is designed not only for conversation, but for executing complex workplace tasks involving documents, spreadsheets, software tools, and multi-step workflows. According to the company, the model combines advances in reasoning, software engineering, computer use, tool orchestration, and long-context processing into one system intended to complete more real work with less back-and-forth.

OpenAI says GPT-5.4 improves performance on tasks involving spreadsheets, presentations, documents, browser workflows, and external tools, while also introducing native computer-use capabilities for developers building AI agents. The company says the model is designed to complete complex tasks more reliably in a single response, reducing the need for repeated prompts or revisions. The model is also more token-efficient than GPT-5.2, which OpenAI says can reduce cost and latency on complex tasks.

The release affects a wide range of users, including ChatGPT subscribers, software developers, enterprise teams, legal professionals, financial professionals, and companies building AI-powered agents into workplace software.

Here’s what this means for the broader market: OpenAI is continuing to push its mainline models beyond chatbot interactions and toward practical workplace automation, where accuracy, tool use, and software execution matter as much as raw reasoning performance.

Key Takeaways: OpenAI’s GPT-5.4 release for professional work and AI agents

OpenAI has launched GPT-5.4 and GPT-5.4 Pro across ChatGPT, the OpenAI API, and Codex as new models for professional work and advanced AI workflows.

GPT-5.4 combines reasoning, coding, computer use, and tool orchestration into a single frontier model designed for real-world business tasks and developer workflows.

OpenAI says GPT-5.4 improves performance on knowledge work benchmarks, including tasks involving spreadsheets, presentations, documents, legal analysis, and financial workflows.

GPT-5.4 is OpenAI’s first general-purpose model with native computer-use capabilities, allowing AI agents to interact with software systems through screenshots, browser interfaces, keyboard input, and mouse actions.

The model also introduces tool search in the OpenAI API, helping developers manage large tool ecosystems more efficiently while reducing token usage and preserving context.

OpenAI says GPT-5.4 is more token-efficient than GPT-5.2, which could lower cost and improve speed for long-horizon reasoning, coding, and agent-based automation.

The release expands OpenAI’s push into workplace automation, where AI systems are expected to complete multi-step operational tasks rather than only generate answers.

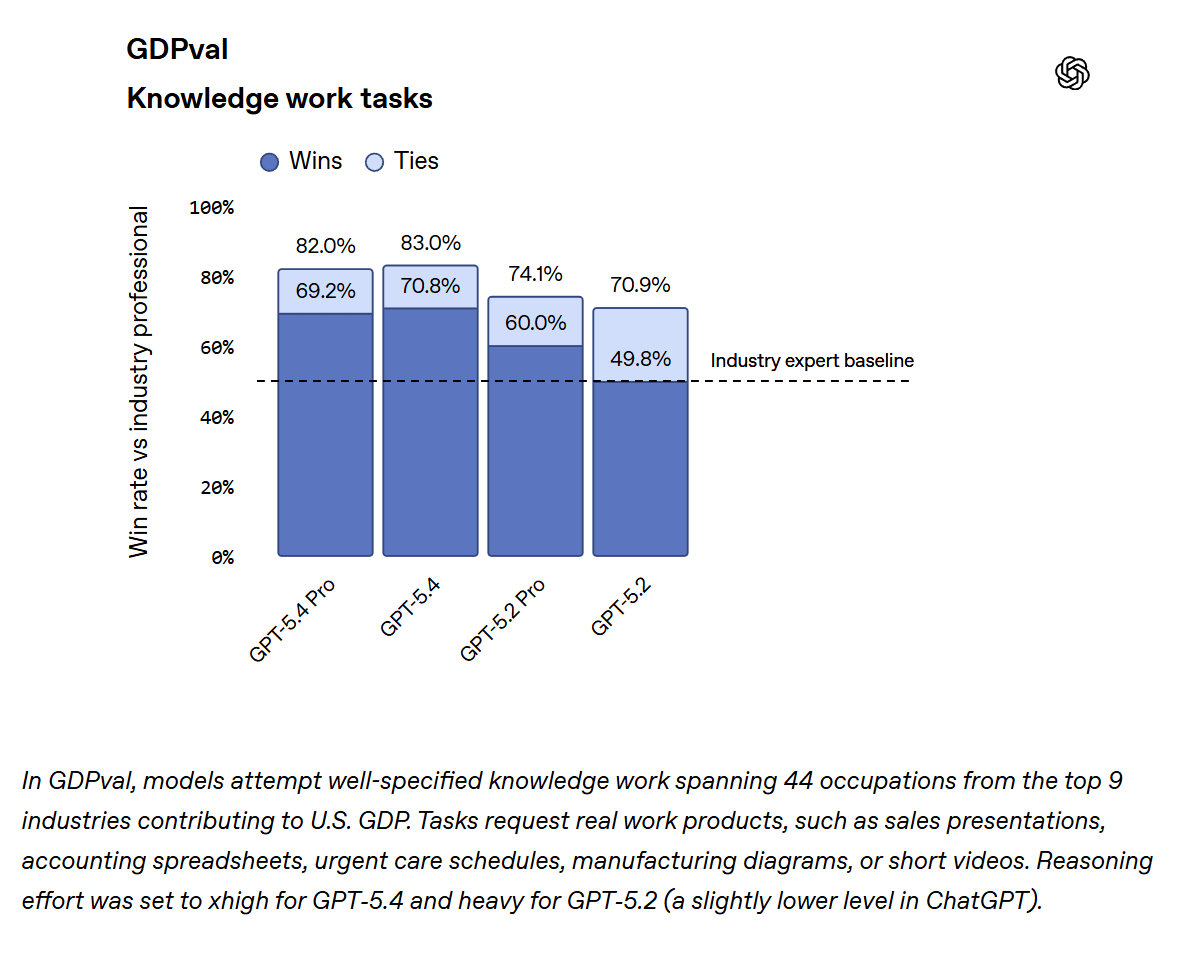

GPT-5.4 benchmark gains in professional knowledge work

OpenAI reports that GPT-5.4 delivers stronger results on benchmarks designed to measure real-world professional tasks.

On GDPval, a benchmark that evaluates knowledge work across 44 occupations spanning nine major industries, GPT-5.4 matches or exceeds industry professionals in 83.0% of comparisons, compared with 70.9% for GPT-5.2.

The benchmark measures tasks such as:

building sales presentations

constructing financial spreadsheets

creating operational schedules

producing technical diagrams and reports

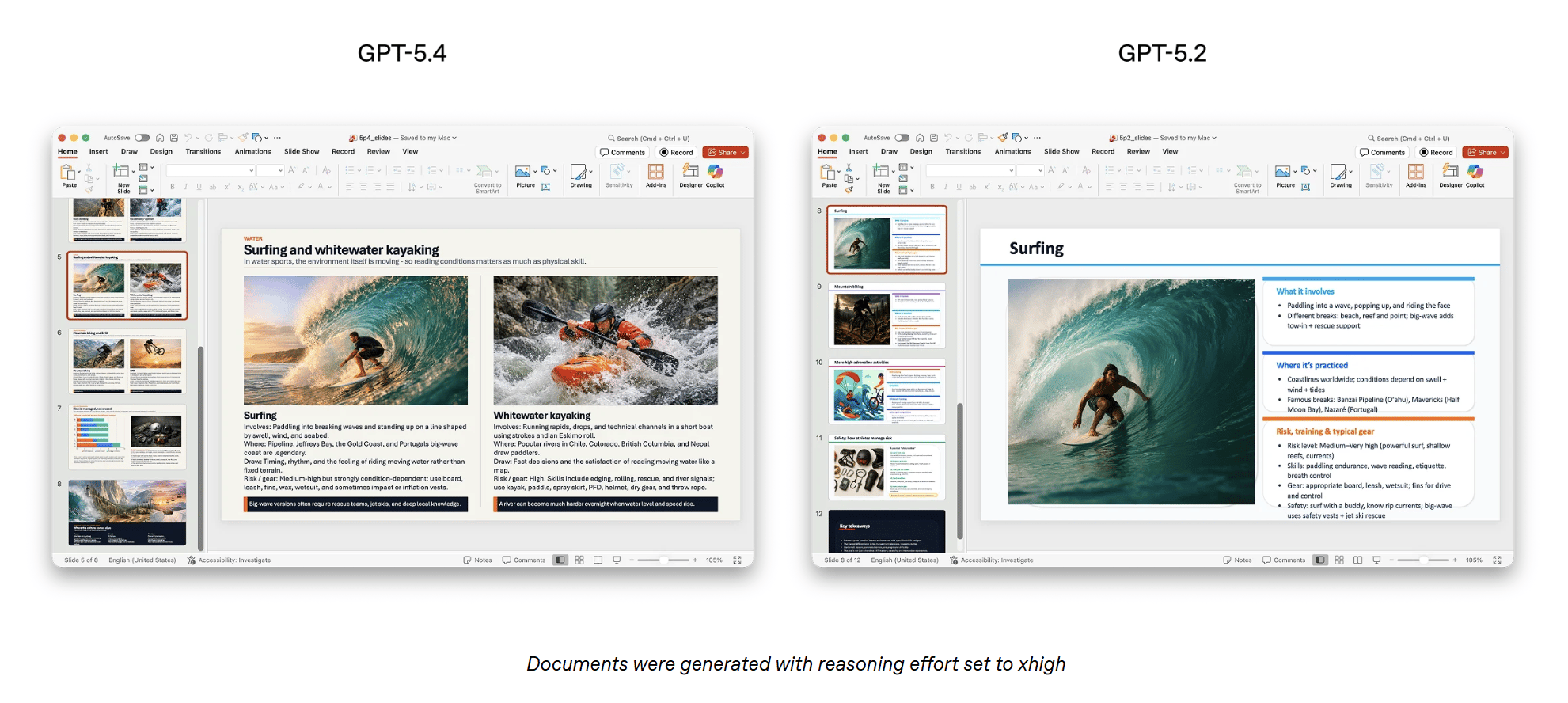

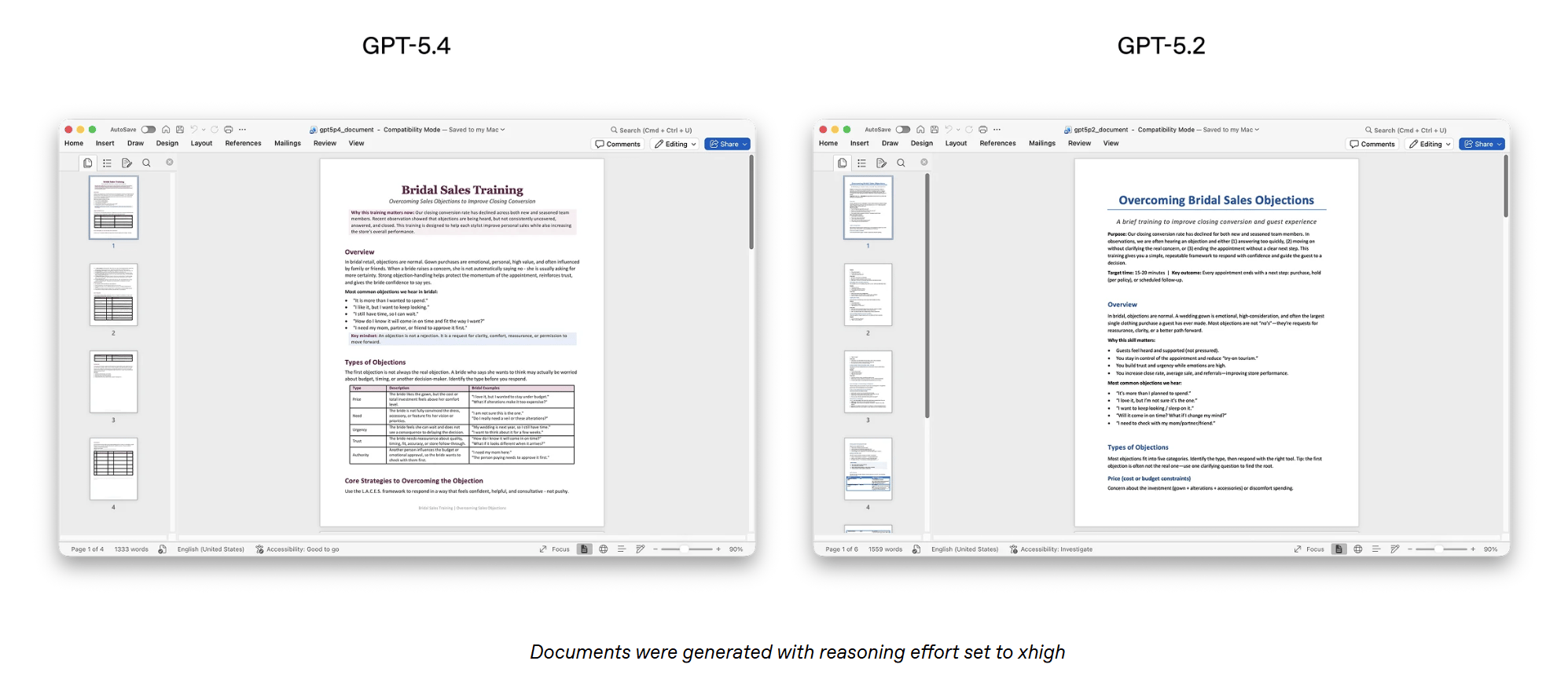

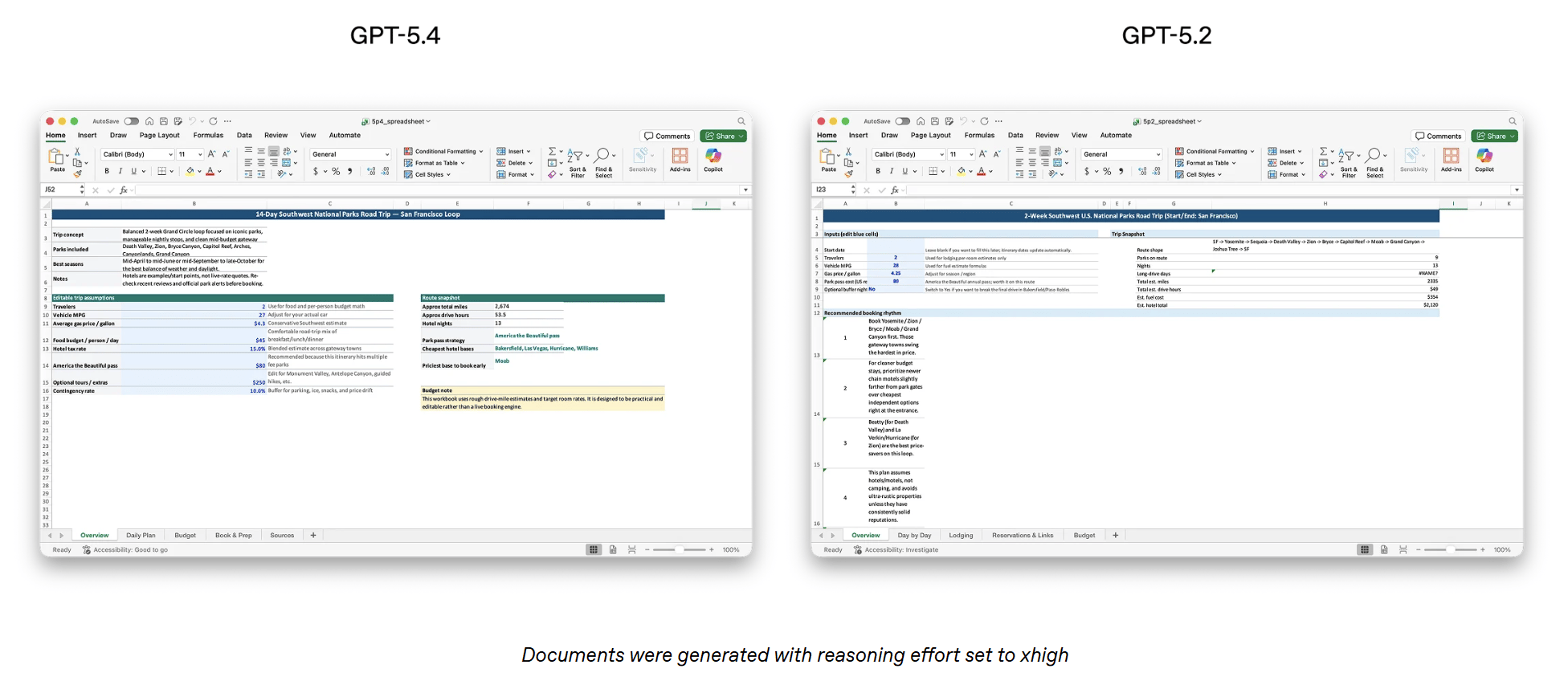

OpenAI also focused on improving GPT-5.4’s ability to handle common workplace tasks involving spreadsheets, presentations, and documents. On an internal benchmark measuring spreadsheet modeling tasks similar to those performed by junior investment banking analysts, OpenAI reports that GPT-5.4 achieved a mean score of 87.3%, compared with 68.4% for GPT-5.2.

In a separate set of presentation evaluation prompts, human reviewers preferred presentations generated by GPT-5.4 68% of the time over those produced by GPT-5.2, citing stronger visual design, greater visual variety, and more effective use of generated images.

Several companies testing GPT-5.4 in professional workflows report improvements in complex analytical tasks and document-heavy work.

“GPT-5.4 is the best model we’ve ever tried. It’s now top of the leaderboard on our APEX-Agents benchmark, which measures model performance for professional services work. It excels at creating long-horizon deliverables such as slide decks, financial models, and legal analysis, delivering top performance while running faster and at a lower cost than competitive frontier models.”

— Brendan Foody, CEO, Mercor

“On our toughest internal finance and Excel evaluations, GPT-5.4 outperformed prior models, improving accuracy by 30 percentage points. This step change in reliability materially expands our automation of model updates and scenario analyses for fundamental investors.”

— Daniel Swiecki, Head of Current.AI Solutions, Walleye Capital

“GPT-5.4 performed extremely well in our financial analysis workflows, which is why we’re adding it to a new model picker inside of Shortcut. On our most challenging evaluation suite, GPT-5.4 (low-effort) matches Opus 4.6 (low-effort) in accuracy while achieving 22% greater token efficiency, resulting in 42% lower cost per task.”

— Nico Christie, Co-Founder & CPO, Fundamental Research Labs

OpenAI says these capabilities are available in ChatGPT through GPT-5.4 Thinking and GPT-5.4 Pro. For enterprise users, the company also introduced a new ChatGPT for Excel add-in designed to support spreadsheet workflows. OpenAI says updated spreadsheet tools and presentation tools are also available in Codex and through the OpenAI API.

OpenAI also says GPT-5.4 improves reliability and factual accuracy. On a set of de-identified prompts where users previously flagged factual errors, the company reports that GPT-5.4 produced:

33% fewer false individual claims

18% fewer responses containing any factual errors

compared with GPT-5.2.

The model also performed strongly in specialized professional evaluations. For example, Harvey’s BigLaw Bench, a benchmark for legal document analysis, reported a 91% score for GPT-5.4 in internal testing.

“GPT-5.4 sets a new bar for document-heavy legal work. On our BigLaw Bench eval, it scored 91%. Compared to other models, GPT-5.4 is currently better at structuring complex transactional analysis, maintaining accuracy across lengthy contracts, and delivering the high level of detail legal practitioners require.”

— Niko Grupen, Head of Applied Research, Harvey

“GPT-5.4 delivered consistent gains across the board in benchmarks, including legal reasoning, hallucination detection, and other core capabilities. We observed marked improvements in long context tasks, which helps make the agentic systems we are building more reliable at scale.”

— Joel Hron, CTO, Thomson Reuters

GPT-5.4 native computer-use capabilities for AI agents

OpenAI says GPT-5.4 is its first general-purpose model to include native computer-use capabilities, allowing AI agents to interact directly with desktop software, websites, and other digital interfaces, while giving developers new ways to build and guide those agents across software workflows.

The model can:

generate code to operate computers using libraries such as Playwright

control mouse and keyboard actions

analyze screenshots of software environments

carry out multi-step tasks across applications

OpenAI says the model’s behavior can also be controlled through developer instructions, allowing teams to adjust how agents operate for different use cases. Developers can configure safety settings as well, including confirmation policies that determine when the model should pause and request approval before completing higher-risk actions.
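A confirmation policy of the kind described above can be pictured as a gate in the agent's action loop. The following is a minimal sketch under stated assumptions, not OpenAI's computer tool: the `Action` type, the `HIGH_RISK_KINDS` set, and the `approve` callback are all hypothetical names invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical action categories; not taken from OpenAI's computer tool.
HIGH_RISK_KINDS = {"submit_payment", "delete_file", "send_email"}


@dataclass(frozen=True)
class Action:
    kind: str    # e.g. "click", "type", "submit_payment"
    target: str  # human-readable description of what is acted on


def needs_confirmation(action: Action) -> bool:
    """Return True when the policy says to pause for approval."""
    return action.kind in HIGH_RISK_KINDS


def run_actions(actions: list[Action], approve: Callable[[Action], bool]) -> list[str]:
    """Process actions in order, asking `approve` before any high-risk one."""
    log = []
    for action in actions:
        if needs_confirmation(action) and not approve(action):
            log.append(f"skipped {action.kind} on {action.target}")
            continue
        # A real agent would perform the UI action here; this sketch only logs.
        log.append(f"ran {action.kind} on {action.target}")
    return log


plan = [
    Action("click", "Invoices tab"),
    Action("type", "search box"),
    Action("submit_payment", "invoice 1042"),
]
# Deny every approval request to show the pause-and-skip path.
result = run_actions(plan, approve=lambda action: False)
```

In practice the `approve` callback would surface the pending action to a human reviewer; keeping it as a plain function makes the policy easy to swap per use case.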

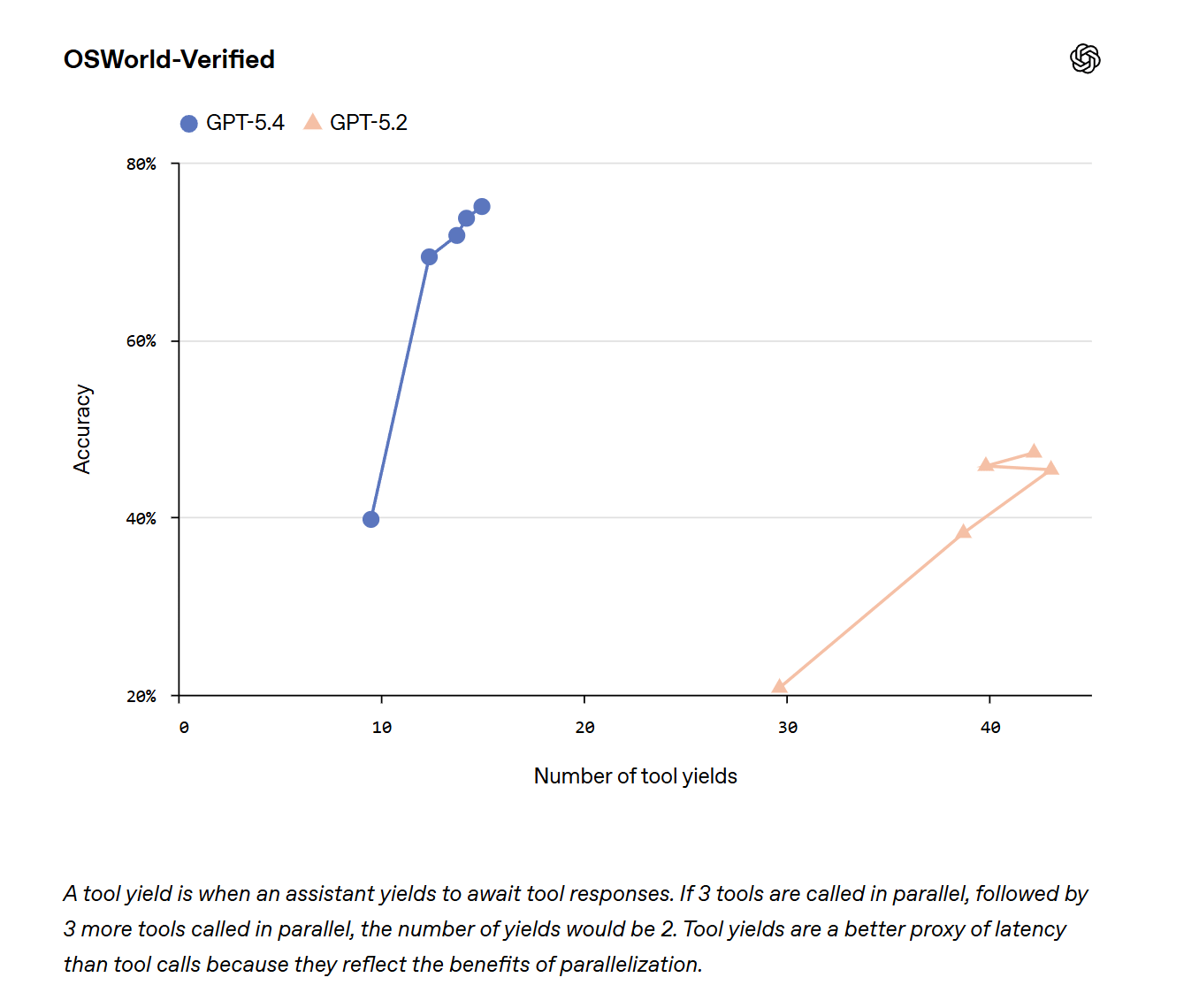

On OSWorld-Verified, a benchmark that measures an AI agent’s ability to operate a desktop environment using screenshots and keyboard or mouse commands, GPT-5.4 achieved a 75.0% success rate, compared with 47.3% for GPT-5.2 and slightly exceeding reported human performance of 72.4%.

The model also improved results on browser-based agent tasks:

WebArena-Verified: GPT-5.4 achieved a 67.3% success rate when using both DOM-driven interaction and screenshot-driven interaction, compared with 65.4% for GPT-5.2.

Online-Mind2Web: GPT-5.4 achieved a 92.8% success rate using screenshot-based observations alone, improving over ChatGPT Atlas’s Agent Mode, which achieved a 70.9% success rate.

OpenAI says GPT-5.4’s improved computer-use performance is supported by stronger visual perception capabilities, allowing the model to better interpret screenshots, documents, and interface elements when navigating software environments.

On MMMU-Pro, a benchmark that measures visual understanding and reasoning, GPT-5.4 scored 81.2% without tool use, compared with 79.5% for GPT-5.2.

The company says these improvements also enhance document parsing. On OmniDocBench, which evaluates how accurately models extract information from documents, GPT-5.4 achieved an average error score of 0.109 (lower is better), compared with 0.140 for GPT-5.2.

OpenAI also introduced higher-fidelity image input options for developers. GPT-5.4 now supports an “original” image detail level capable of processing images up to 10.24 million pixels or a 6000-pixel maximum dimension, while the high-detail setting supports images up to 2.56 million pixels or a 2048-pixel maximum dimension.

According to OpenAI, early API testing shows these higher-resolution modes improve image localization, visual understanding, and click accuracy when models interact with screenshots and interface elements.
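To make the reported image limits concrete, the sketch below estimates how much a screenshot would need to be downscaled to fit each detail level. It assumes an image must satisfy both the total-pixel budget and the maximum-dimension cap, which the announcement does not spell out; the `DETAIL_LIMITS` table and `downscale_factor` function are illustrative names, not part of the OpenAI API.

```python
import math

# Limits as reported above: (max total pixels, max side length in pixels).
DETAIL_LIMITS = {
    "original": (10_240_000, 6000),
    "high": (2_560_000, 2048),
}


def downscale_factor(width: int, height: int, detail: str) -> float:
    """Return the scale (<= 1.0) needed to fit the given detail level.

    Assumption: both the pixel budget and the side-length cap must hold.
    """
    max_pixels, max_side = DETAIL_LIMITS[detail]
    by_pixels = math.sqrt(max_pixels / (width * height))
    by_side = max_side / max(width, height)
    return min(1.0, by_pixels, by_side)


# A 4096x2048 capture is limited by its long side at the "high" level.
factor = downscale_factor(4096, 2048, "high")
```

Under these assumptions, a 1920x1080 screenshot fits the high-detail level unchanged, while wider captures are constrained by whichever limit is tighter.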

Companies testing GPT-5.4 in real-world computer-use environments also report significant performance improvements.

“On Pace’s most complex computer-use tasks, GPT-5.4 increased performance from 52% to 93%, achieving state-of-the-art results and delivering a dramatic uplift over prior models. In the regulated insurance industry, that gain is critical to reliably running thousands of mission-critical insurance operations every day.”

— Jamie Cuffe, Founder, Pace

In the OpenAI API, OpenAI says developers can access these capabilities through an updated computer tool designed to help agents interact with software environments. The company has also published updated documentation outlining recommended implementation practices.

GPT-5.4 coding performance and developer workflow improvements

GPT-5.4 combines the coding capabilities introduced with GPT-5.3-Codex with broader reasoning and agent workflows, allowing the model to handle longer software engineering tasks that involve tools, iteration, and multi-step execution with less manual intervention.

On SWE-Bench Pro, a benchmark that evaluates the ability to solve real GitHub issues, GPT-5.4 matches or exceeds the performance of GPT-5.3-Codex while operating at lower latency depending on reasoning settings.

OpenAI says GPT-5.4 also improves complex frontend development tasks, producing more functional and more visually refined results than previous models in internal testing.

In Codex, users can enable a /fast mode option that increases token generation speed by up to 1.5×, allowing developers to iterate more quickly when writing, testing, and debugging code. OpenAI says developers can access similar performance improvements in the OpenAI API by enabling priority processing, which increases processing speed for time-sensitive workloads.

As part of the release, OpenAI is also introducing an experimental Codex capability called “Playwright (Interactive)”, designed to demonstrate GPT-5.4’s combined coding and computer-use abilities. The tool allows Codex to visually debug web applications and Electron applications and even test an application while it is being built.

Early adopters testing GPT-5.4 in development environments report improvements in reliability and workflow automation.

“We made GPT-5.4 the default model in Augment’s agentic development environment, Intent, because of its consistent follow-through and reliable tool use powering multi-agent workflows end-to-end. GPT-5.4 is built for this moment in AI: long-horizon reasoning, better efficiency, and, crucially, agent orchestration.”

— Guy Gur-Ari, Co-Founder, Augment Code

“GPT-5.4 is currently the leader on our internal benchmarks. Our engineers find it to be more natural and assertive than previous models. It works through ambiguous problems without second-guessing itself, and it's proactive about parallelizing work to keep things moving.”

— Lee Robinson, VP of Developer Education, Cursor

GPT-5.4 tool ecosystems, tool search, and agentic web browsing

GPT-5.4 introduces improvements in how models discover and use external tools, enabling AI agents to operate across larger tool ecosystems, select the appropriate tools more reliably, and complete multi-step workflows with lower cost and latency.

One key feature is tool search, which allows the model to retrieve tool definitions only when needed instead of loading them all into the prompt. Previously, systems with large tool libraries often had to include thousands—or even tens of thousands—of tokens of tool definitions in every request, increasing cost and slowing responses.

With tool search, GPT-5.4 receives a lightweight list of available tools and can look up full tool definitions only when necessary. OpenAI says this approach reduces token usage, speeds up requests, and allows agents to work reliably with much larger tool ecosystems. This becomes especially important for systems using large tool libraries—such as MCP servers—that may contain tens of thousands of tokens of tool definitions.

To demonstrate the efficiency gains, OpenAI evaluated 250 tasks from Scale’s MCP Atlas benchmark using all 36 MCP servers in two configurations: one where every MCP function was loaded directly into the model’s context, and another where the servers were accessed through tool search.

According to the company, the tool-search configuration reduced total token usage by 47% while maintaining the same accuracy.
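The idea behind these savings can be illustrated with a toy comparison between loading every tool definition up front and loading only a lightweight index plus the one definition that is needed. This is a sketch of the general technique, not the OpenAI tool search API: `ToolRegistry`, its methods, the 50 generated tools, and the four-characters-per-token estimate are all assumptions made for illustration.

```python
import json
from dataclasses import dataclass, field


def estimate_tokens(text: str) -> int:
    """Very rough token estimate, assuming about 4 characters per token."""
    return max(1, len(text) // 4)


@dataclass
class ToolRegistry:
    """Hypothetical registry: tool name -> (one-line summary, full definition)."""
    tools: dict = field(default_factory=dict)

    def register(self, name: str, summary: str, definition: str) -> None:
        self.tools[name] = (summary, definition)

    def eager_context(self) -> str:
        """Every full definition, as if all were loaded into the prompt."""
        return "\n".join(definition for _, definition in self.tools.values())

    def index_context(self) -> str:
        """Lightweight listing: names and summaries only."""
        return "\n".join(
            f"{name}: {summary}" for name, (summary, _) in self.tools.items()
        )

    def lookup(self, name: str) -> str:
        """Fetch one full definition on demand."""
        return self.tools[name][1]


registry = ToolRegistry()
for i in range(50):
    # Made-up tool definitions with 20 string parameters each.
    definition = json.dumps(
        {"name": f"tool_{i}", "parameters": {f"arg{j}": "string" for j in range(20)}}
    )
    registry.register(f"tool_{i}", f"Hypothetical tool number {i}", definition)

eager_tokens = estimate_tokens(registry.eager_context())
lazy_tokens = estimate_tokens(registry.index_context()) + estimate_tokens(
    registry.lookup("tool_7")
)
```

The size of the gap between `eager_tokens` and `lazy_tokens` depends entirely on how many tools exist and how many a task actually needs, so the numbers here say nothing about the 47% figure OpenAI reports.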

GPT-5.4 also improves tool calling, making the model more accurate and efficient when deciding when and how to use tools during reasoning, particularly in the OpenAI API. On Toolathlon, a benchmark measuring how well AI agents combine APIs and software tools to complete multi-step workflows, GPT-5.4 achieved higher accuracy in fewer reasoning turns than GPT-5.2. For example, a typical task might require an agent to read emails, extract assignment attachments, upload files, grade them, and record results in a spreadsheet.

OpenAI says these improvements also benefit latency-sensitive applications. When models run with reasoning effort set to None, GPT-5.4 still shows stronger performance than previous models, improving efficiency for real-time systems.

Agentic web browsing improvements

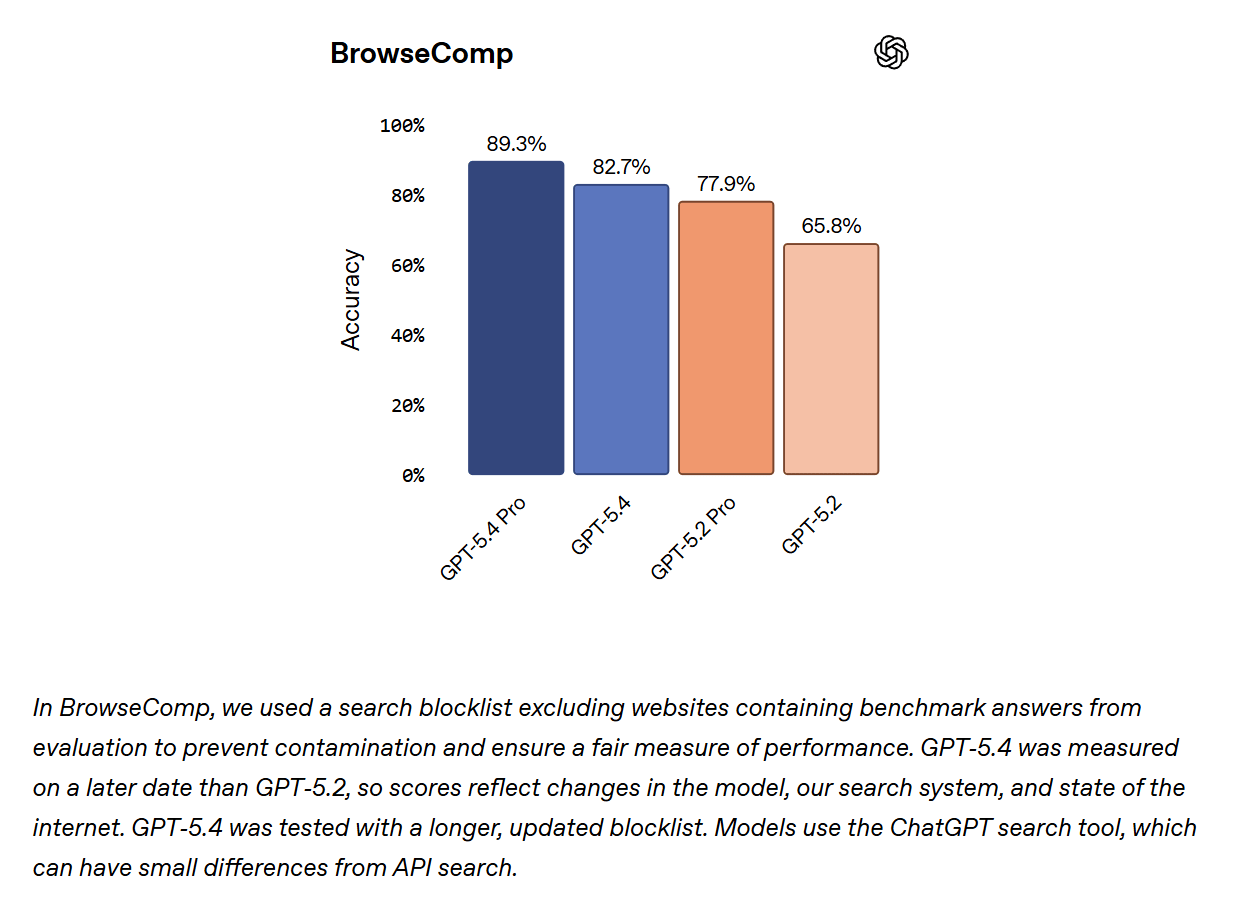

The model also improves agentic web browsing. On BrowseComp, a benchmark measuring how well AI agents search the web to locate difficult information, GPT-5.4 improved performance by 17 percentage points over GPT-5.2, while GPT-5.4 Pro achieved 89.3% accuracy, setting a new state-of-the-art result.

OpenAI says these improvements allow GPT-5.4 Thinking to persist across multiple rounds of web search, gather information from many sources, and synthesize results into more complete answers, particularly for complex or hard-to-find queries.

Companies building agent-powered workflows say these improvements in tool use and data retrieval are already changing how AI systems perform complex operational tasks.

“GPT-5.4 xhigh is the new state of the art for multi-step tool use. Zapier runs some of the most rigorous tool use benchmarks in the industry, testing models across hundreds of advanced real-world workflows. GPT-5.4 finished the job where previous models gave up — the most persistent model to date.”

— Wade, CEO, Zapier

“GPT-5.4 is dramatically faster and more efficient at agentic data retrieval than any other model we’ve used. It's able to reason across multiple data sources, files, and other context to do GTM research that helps teams close deals. Its writing and personality are much more natural, making GPT-5.4 a great fit for our conversational agents.”

— Varun Anand, Co-founder, Clay

“On OfficeQA-Pro, our signature benchmark for grounded reasoning over complex enterprise documents, GPT-5.4 set a new performance bar. GPT-5.4-powered agents delivered significantly higher accuracy than prior generations and ran about 2× faster than other frontier models, underscoring strong tool use and multi-step reasoning in grounded workflows.”

— Hanlin Tang, CTO of Neural Networks, Databricks

GPT-5.4 Thinking upgrades in ChatGPT steerability and long-context reasoning

In ChatGPT, GPT-5.4 Thinking can outline a short plan before completing longer or more complex tasks, similar to how Codex explains its approach when starting work. Users can adjust instructions or redirect the model mid-response, making it easier to guide the output without restarting the conversation or issuing multiple follow-up prompts.

OpenAI says this feature is available now on chatgpt.com and the Android app, with iOS support coming soon.

The company also says GPT-5.4 can spend more time reasoning through difficult problems while maintaining stronger awareness of earlier steps in the conversation. This helps the model handle longer workflows and complex prompts while keeping responses coherent and relevant.

These changes are intended to help users complete more complicated tasks with fewer prompt revisions while maintaining clearer reasoning across extended interactions.

GPT-5.4 safety safeguards and High cyber capability classification

OpenAI says GPT-5.4 is classified as High cyber capability under the company’s Preparedness Framework, the same risk tier previously applied to GPT-5.3-Codex. The model is deployed with corresponding safeguards documented in its system card.

According to the company, the expanded security stack includes:

monitoring systems

trusted access controls

automated blocking for higher-risk requests on Zero Data Retention (ZDR) surfaces

OpenAI says these safeguards are designed to mitigate potential misuse of cybersecurity capabilities, which the company describes as inherently dual-use. For certain customers using ZDR environments, request-level blocking remains part of the risk-mitigation system, although the company notes that classifier improvements are ongoing and may still produce occasional false positives.

The company says the latest updates are intended to reduce unnecessary refusals and overly cautious responses while maintaining strong protections against misuse.

OpenAI also continues research on chain-of-thought (CoT) monitorability, which studies how model reasoning can be analyzed to detect potential misbehavior. As part of this work, researchers introduced a new open-source evaluation called CoT controllability, which tests whether a model can intentionally hide or manipulate its reasoning to evade monitoring.

According to OpenAI, GPT-5.4 Thinking shows low ability to deliberately obscure its reasoning, which researchers consider a positive safety signal because it suggests monitoring systems can still reliably observe how the model arrives at conclusions.

GPT-5.4 availability, rollout, and API pricing

GPT-5.4 is rolling out gradually across ChatGPT, the OpenAI API, and Codex starting today.

In ChatGPT:

GPT-5.4 Thinking replaces GPT-5.2 Thinking for Plus, Team, and Pro users.

GPT-5.4 Pro is available for Pro and Enterprise plans.

GPT-5.2 Thinking will remain available as a Legacy Model for three months before being retired on June 5, 2026.

Enterprise and Edu customers can enable early access through admin settings.

Context window limits remain unchanged from GPT-5.2 Thinking.

In the API:

GPT-5.4 is available as gpt-5.4, with a higher-performance gpt-5.4-pro version for complex workloads.

OpenAI says GPT-5.4 is priced higher per token than GPT-5.2 but is designed to complete many tasks using fewer tokens, which can reduce overall cost.

The API also supports multiple pricing modes, including:

Batch and Flex processing, which run at about half the standard API price

Priority processing, which runs at double the standard rate for faster responses
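The interaction between per-token price, token efficiency, and processing mode can be worked through with simple arithmetic. In the sketch below, the mode multipliers follow the figures above (about half for Batch and Flex, double for Priority), but the per-million-token prices and the token counts are placeholder values chosen only for illustration, not OpenAI's actual pricing.

```python
# Multipliers relative to the standard rate, as described above.
MODE_MULTIPLIER = {"standard": 1.0, "batch": 0.5, "flex": 0.5, "priority": 2.0}


def task_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_m: float,
    output_price_per_m: float,
    mode: str = "standard",
) -> float:
    """Cost of one task in dollars, given prices per million tokens."""
    base = (
        input_tokens * input_price_per_m + output_tokens * output_price_per_m
    ) / 1_000_000
    return base * MODE_MULTIPLIER[mode]


# Placeholder scenario: the newer model costs more per token
# but finishes the same task with fewer tokens.
older = task_cost(100_000, 20_000, input_price_per_m=1.00, output_price_per_m=8.00)
newer = task_cost(70_000, 12_000, input_price_per_m=1.25, output_price_per_m=10.00)
newer_batch = task_cost(70_000, 12_000, 1.25, 10.00, mode="batch")
newer_priority = task_cost(70_000, 12_000, 1.25, 10.00, mode="priority")
```

With these made-up inputs the newer model is cheaper per task despite the higher per-token price; whether that holds in practice depends on the real prices and on how many tokens a given workload saves.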

In Codex:

GPT-5.4 includes experimental support for a 1 million token context window, allowing developers to run longer workflows. Requests exceeding the standard 272K context window count against usage limits at twice the normal rate. Developers can try this by configuring model_context_window and model_auto_compact_token_limit.

Q&A: GPT-5.4 release and capabilities

Q: What is GPT-5.4?

A: GPT-5.4 is OpenAI’s newest frontier model for professional knowledge work, coding, AI agents, and multi-step software workflows. It is being rolled out across ChatGPT, the OpenAI API, and Codex.

Q: What did OpenAI announce with this release?

A: OpenAI announced the launch of GPT-5.4 and GPT-5.4 Pro, along with new capabilities including stronger reasoning, improved coding performance, native computer use, better tool orchestration, tool search in the API, and continued support for long-context workflows.

Q: Where is GPT-5.4 available?

A: In ChatGPT, GPT-5.4 appears as GPT-5.4 Thinking for paid users, while GPT-5.4 Pro is available on higher-tier plans. In the API, developers can access the model as gpt-5.4 and gpt-5.4-pro. The model is also rolling out in Codex.

Q: What are the biggest new capabilities in GPT-5.4?

A: OpenAI says the biggest additions are stronger professional knowledge work performance, native computer-use capabilities, improved coding and frontend development, more efficient tool use and tool search, better agentic web browsing, and stronger long-context reasoning across complex workflows.

Q: How does GPT-5.4 differ from GPT-5.2?

A: According to OpenAI, GPT-5.4 improves on GPT-5.2 in benchmark performance, factual reliability, computer use, web browsing, and tool-based task completion. OpenAI also says the model is more token-efficient, which can reduce cost and latency on longer or more complex tasks.

Q: Why does GPT-5.4 matter for developers and businesses?

A: The release matters because it pushes OpenAI’s mainline models further into real workplace execution. Instead of only generating responses, GPT-5.4 is designed to help AI agents navigate software, use tools, work across documents, and complete multi-step operational tasks more reliably.

Q: What should users watch most closely with GPT-5.4?

A: The most important question is whether GPT-5.4’s gains in computer use, tool orchestration, and knowledge work reliability translate into practical automation inside real business environments. That is where the model’s broader commercial impact is most likely to be measured.

What This Means: GPT-5.4 pushes OpenAI deeper into workplace automation

GPT-5.4 is not just a model upgrade; it is part of OpenAI’s broader effort to make its mainline AI systems more useful for real professional work across software, documents, and multi-step business tasks. The announcement centers less on chatbot novelty and more on execution: handling spreadsheets, navigating interfaces, using tools, and completing workflows with greater reliability.

Who should care: Developers, enterprise software teams, operations leaders, legal professionals, financial professionals, and companies building AI agents into workplace systems should pay close attention. These are the users most likely to benefit if GPT-5.4’s gains in tool use, computer interaction, and long-context task execution hold up in production environments.

Why this matters now: The competitive frontier in AI is moving beyond who has the smartest model in isolation. It is increasingly about which AI systems can actually operate inside the digital environments where work happens—browsers, documents, spreadsheets, internal tools, and business software. GPT-5.4 is OpenAI’s clearest attempt yet to strengthen that bridge between model intelligence and workplace execution.

Another notable shift in this release is OpenAI’s focus on tool orchestration and large tool ecosystems. Features such as tool search allow AI agents to discover and load tools dynamically rather than receiving every tool definition upfront. This approach reduces token overhead and enables agents to operate across much larger tool environments, including MCP servers that can contain many integrations and APIs. For developers building autonomous workflows, this design moves AI systems closer to acting as coordinators of software tools rather than just generators of answers.

What decision this affects: For companies evaluating AI copilots, enterprise automation tools, agent platforms, or developer workflows, the key decision is no longer just whether a model can generate strong text or code. It is whether the model can be trusted to complete longer, more operational tasks with acceptable accuracy, cost, and speed.

The bigger question now is not whether AI can assist knowledge workers, but which models are becoming reliable enough to start doing meaningful portions of the work itself.

Sources:

OpenAI - Introducing GPT-5.4

https://openai.com/index/introducing-gpt-5-4/

OpenAI - GPT-5.4 Thinking System Card

https://openai.com/index/gpt-5-4-thinking-system-card/

OpenAI Developers - Latest Model Guide

https://developers.openai.com/api/docs/guides/latest-model

OpenAI - GDPval Benchmark Overview

https://openai.com/index/gdpval/

OpenAI - ChatGPT Spreadsheets App

https://chatgpt.com/apps/spreadsheets/?openaicom_referred=true

OpenAI GitHub - Spreadsheet Skill

https://github.com/openai/skills/tree/main/skills/.curated/spreadsheet

OpenAI GitHub - Slides Skill

https://github.com/openai/skills/tree/main/skills/.curated/slides

OpenAI GitHub - Playwright (Interactive) Skill

https://github.com/openai/skills/tree/main/skills/.curated/playwright-interactive

OpenAI Developers - Tool Search Guide

https://developers.openai.com/api/docs/guides/tools-tool-search

Scale AI - MCP Atlas Benchmark Leaderboard

https://scale.com/leaderboard/mcp_atlas

OpenAI - Chain-of-Thought Controllability Research

https://openai.com/index/reasoning-models-chain-of-thought-controllability/

Editor’s Note: This article was created by Alicia Shapiro, CMO of AiNews.com, with writing, image, and idea-generation support from ChatGPT, an AI assistant. However, the final perspective and editorial choices are solely Alicia Shapiro’s. Special thanks to ChatGPT for assistance with research and editorial support in crafting this article.