A visualization of how TurboQuant transforms overloaded GPU memory into a more efficient, scalable system—reducing costs while improving AI performance. Image Source: DALL·E via ChatGPT (OpenAI)

Google TurboQuant Slashes AI Memory Costs 6x Without Retraining, Enabling Cheaper and Faster AI Deployment

Google Research has introduced TurboQuant, a compression algorithm that reduces the memory requirements of large language models (LLMs) by more than 6x without retraining or accuracy loss—addressing one of the most expensive bottlenecks in AI infrastructure.

For organizations deploying AI at scale, a key question is emerging: are current cost and performance limits truly hardware constraints—or solvable engineering problems?

TurboQuant targets key-value (KV) cache memory, the high-speed storage layer AI models use to retain context during processing. As models handle longer and more complex tasks, this cache grows rapidly, increasing GPU memory usage, slowing inference, and raising operating costs.

The breakthrough is particularly relevant for AI infrastructure teams, enterprise technology leaders, and product teams evaluating large-scale deployments or on-device AI, where memory constraints often limit feasibility.

In short, TurboQuant compresses the memory AI models rely on by more than 6x—without sacrificing accuracy or requiring retraining—making AI systems significantly cheaper and more scalable to run.

TurboQuant is a runtime compression technique that reduces the memory footprint of AI model context storage while preserving performance, enabling more efficient large-scale and on-device AI deployment.

Key Takeaways: Google TurboQuant Reduces AI Memory Costs and Improves Performance

TurboQuant is a runtime AI compression technique that reduces memory usage by over 6x without retraining—making AI systems cheaper, faster, and easier to deploy at scale.

Google Research developed TurboQuant to reduce KV cache memory, one of the largest cost drivers in AI inference infrastructure

The technique achieves 6x+ memory reduction with no measurable accuracy loss across tasks like question answering, code generation, and summarization

TurboQuant operates at runtime, requiring no retraining or fine-tuning, making it compatible with existing deployed models

On NVIDIA H100 GPUs, 4-bit TurboQuant delivers up to 8x faster attention computation compared to 32-bit baselines

The system compresses KV cache to as low as 3 bits per value, down from standard 32-bit precision

TurboQuant also improves vector search performance, outperforming methods like PQ and RabbiQ without requiring dataset-specific lookup tables

The approach is theoretically grounded and near-optimal, meaning further improvements are mathematically limited

The AI Infrastructure Problem: KV Cache Memory and Cost Constraints

When an AI model processes a conversation, a document, or a long reasoning chain, it doesn't recalculate every prior step from scratch. Instead, it stores the results of those computations in a key-value (KV) cache — a fast-access memory layer that allows the model to retrieve prior context instantly. The longer the task, the larger that cache grows.
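To make the caching idea concrete, here is a minimal Python sketch of a single attention head processing tokens one at a time. It is a toy, not how any production model is implemented. Random vectors stand in for the learned key, value, and query projections, and the names `KVCache`, `attend`, and `decode` are hypothetical. It shows that each step appends one key and one value and then reuses everything cached so far, so the stored data grows linearly with the number of tokens.

```python
import math
import random

def attend(query, keys, values):
    """Scaled dot-product attention of one query over every cached key/value pair."""
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    top = max(scores)
    weights = [math.exp(s - top) for s in scores]  # numerically stable softmax
    total = sum(weights)
    return [sum(w * v[i] for w, v in zip(weights, values)) / total
            for i in range(len(values[0]))]

class KVCache:
    """Toy single-head cache: one key vector and one value vector per processed token."""
    def __init__(self):
        self.keys, self.values = [], []

    def append(self, key, value):
        self.keys.append(key)
        self.values.append(value)

    def num_floats(self):
        return sum(map(len, self.keys)) + sum(map(len, self.values))

def decode(num_tokens, d=8, seed=0):
    """Process tokens one at a time and record the cache size after each step."""
    rng = random.Random(seed)
    cache, sizes = KVCache(), []
    for _ in range(num_tokens):
        # Random vectors stand in for the learned key/value/query projections.
        k = [rng.gauss(0, 1) for _ in range(d)]
        v = [rng.gauss(0, 1) for _ in range(d)]
        q = [rng.gauss(0, 1) for _ in range(d)]
        cache.append(k, v)                   # store this step once...
        attend(q, cache.keys, cache.values)  # ...and reuse all earlier steps
        sizes.append(cache.num_floats())
    return sizes

sizes = decode(4)
print(sizes)  # [16, 32, 48, 64]
```

Real models keep a cache like this for every layer and every attention head, which is why long contexts translate so directly into GPU memory.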

For organizations running AI at scale, KV cache size is a direct cost driver. Larger caches require more GPU memory, which limits how many simultaneous requests a model can handle, increases hardware requirements, and raises per-query operating costs. Compressing the cache is a known solution, but traditional vector quantization methods — which reduce the numerical precision of stored values — come with a catch: they require storing correction constants alongside every block of compressed data. That overhead adds 1–2 bits per value and partially negates the memory savings.
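The size of that overhead follows from simple arithmetic. The sketch below assumes, for illustration only, a block-wise quantizer that stores one 16-bit scale and one 16-bit zero-point per block; the function name is hypothetical. Spreading those 32 extra bits across a block of 16 or 32 values adds 2 or 1 bits to every value.

```python
def effective_bits_per_value(value_bits, block_size, constants_per_block=2, constant_bits=16):
    """Average storage cost per value when every block carries its own constants.

    Assumes (for illustration) a scale and a zero-point stored at 16-bit precision
    per block, a common layout for block-wise quantization.
    """
    overhead = constants_per_block * constant_bits / block_size
    return value_bits + overhead

for block in (16, 32):
    bits = effective_bits_per_value(3, block)
    print(f"block={block}: {bits:.1f} bits/value ({bits - 3:.1f} bits of overhead)")
# block=16: 5.0 bits/value (2.0 bits of overhead)
# block=32: 4.0 bits/value (1.0 bits of overhead)
```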

TurboQuant was built specifically to eliminate that overhead entirely, achieving compression without the correction penalty that has made prior approaches a compromise.

How TurboQuant Works: Compressing AI Memory Without Accuracy Loss

For anyone who remembers the compression-breakthrough storyline from the TV show Silicon Valley, the idea of dramatically shrinking data without losing quality may sound familiar. Google's approach applies that concept to AI models in practice, reducing memory requirements by over 6x with minimal impact on performance.

TurboQuant achieves its results through two algorithms working in sequence, each addressing a different layer of the compression problem.

The first, PolarQuant, addresses the overhead problem at its source. TurboQuant begins by randomly rotating the data vectors, a step that simplifies the data's geometry and makes the compression that follows significantly more effective. Traditional compression stores data using standard coordinates; think of describing a location as "3 blocks east, 4 blocks north." PolarQuant converts those coordinates into polar form: "5 blocks at about 37 degrees east of north." This reorganization means the boundaries of the data become predictable and fixed. The model no longer needs to calculate and store normalization constants for each data block, because the geometry does that work automatically, and the overhead disappears. The real-world impact: PolarQuant captures the core structure of the data using most of the available bits, with no wasted storage on correction terms.
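The two ideas in this paragraph, a random rotation followed by a switch to polar coordinates, can be sketched in plain Python. This is a simplified stand-in, not Google's PolarQuant: it converts coordinates pairwise, keeps each pair's radius at full precision, and quantizes only the angle on a uniform grid, whereas the published method differs in its details. The function names are hypothetical. What it demonstrates is that an angle always lies in the same fixed range, so the quantization grid never needs a per-block scale or zero-point. It also checks the "3 east, 4 north" example: the radius is 5 and the bearing from north is about 36.87 degrees.

```python
import math
import random

def random_rotation(d, rng):
    """Random orthogonal d x d matrix: Gram-Schmidt applied to Gaussian vectors."""
    rows = []
    while len(rows) < d:
        v = [rng.gauss(0, 1) for _ in range(d)]
        for r in rows:
            proj = sum(a * b for a, b in zip(v, r))
            v = [a - proj * b for a, b in zip(v, r)]
        n = math.sqrt(sum(a * a for a in v))
        if n > 1e-9:
            rows.append([a / n for a in v])
    return rows

def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]

def transpose(m):
    return [list(col) for col in zip(*m)]

def polar_encode(x, angle_bits):
    """Store each coordinate pair as (radius, angle code).

    Angles always fall in [-pi, pi], so the grid is fixed in advance and
    no per-block scale or zero-point is stored. Radii stay full precision here.
    """
    levels = 2 ** angle_bits
    step = 2 * math.pi / levels
    radii, codes = [], []
    for i in range(0, len(x), 2):
        a, b = x[i], x[i + 1]
        radii.append(math.hypot(a, b))
        theta = math.atan2(b, a)
        codes.append(round((theta + math.pi) / step) % levels)  # pi wraps to -pi
    return radii, codes

def polar_decode(radii, codes, angle_bits):
    step = 2 * math.pi / 2 ** angle_bits
    out = []
    for r, c in zip(radii, codes):
        theta = c * step - math.pi
        out.extend([r * math.cos(theta), r * math.sin(theta)])
    return out

# The article's example: 3 east, 4 north -> radius 5, about 36.87 degrees east of north.
radius = math.hypot(3, 4)
bearing = math.degrees(math.atan2(3, 4))  # atan2(east, north) measures from north

rng = random.Random(0)
d, angle_bits = 8, 4
R = random_rotation(d, rng)
x = [rng.gauss(0, 1) for _ in range(d)]
radii, codes = polar_encode(matvec(R, x), angle_bits)                 # rotate, then go polar
x_hat = matvec(transpose(R), polar_decode(radii, codes, angle_bits))  # undo the rotation
rel_err = math.dist(x, x_hat) / math.hypot(*x)
print(f"radius={radius}, bearing={bearing:.2f} deg, relative error={rel_err:.3f}")
```

Because the rotation is orthogonal, it changes neither lengths nor dot products, so it can be undone exactly; only the angle rounding introduces error.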

The second algorithm, QJL (Quantized Johnson-Lindenstrauss), handles the small residual error that remains after PolarQuant compression. Using a mathematical technique that preserves the essential relationships between data points while reducing each value to a single sign bit (+1 or -1), QJL functions as a zero-overhead error-checker. It keeps the model's attention scores — the mechanism by which a model determines which parts of its input are relevant and which can be ignored — accurate even after aggressive compression.
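The core Johnson-Lindenstrauss trick can be sketched in a few lines: project a stored key onto random Gaussian directions and keep only the sign of each projection (one bit each) plus the key's length. Because the query is projected at full precision, a simple rescaling turns those sign bits into an unbiased estimate of the query-key dot product that attention scores are built from. This is a minimal illustration of the general sign-bit estimator, with hypothetical function names and an arbitrarily chosen number of projections; it is not Google's implementation.

```python
import math
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def qjl_encode(key, S):
    """Keep one sign bit per random projection, plus the key's Euclidean norm."""
    signs = [1 if dot(row, key) >= 0 else -1 for row in S]
    return signs, math.sqrt(dot(key, key))

def qjl_inner_product(query, signs, key_norm, S):
    """Estimate <query, key> from the key's sign bits.

    For a Gaussian direction s, E[(s.q) * sign(s.k)] = sqrt(2/pi) * <q, k> / ||k||,
    so rescaling the average by sqrt(pi/2) * ||k|| gives an unbiased estimate.
    The query is projected at full precision; only the stored key is 1-bit.
    """
    acc = sum(sgn * dot(row, query) for sgn, row in zip(signs, S))
    return math.sqrt(math.pi / 2) * key_norm * acc / len(S)

rng = random.Random(0)
d, m = 16, 4096  # m (number of projections) is an arbitrary illustrative choice
S = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(m)]
key = [rng.gauss(0, 1) for _ in range(d)]
query = [rng.gauss(0, 1) for _ in range(d)]

signs, key_norm = qjl_encode(key, S)
estimate = qjl_inner_product(query, signs, key_norm, S)
exact = dot(query, key)
print(f"exact={exact:.3f}  estimate={estimate:.3f}")
```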

The key point: together, PolarQuant and QJL allow TurboQuant to compress KV cache data to as few as 3 bits per value, eliminating memory overhead entirely, while maintaining the model's ability to process long-context tasks without performance degradation. No retraining is required because the compression operates at runtime on the memory layer, not on the model's underlying weights.
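A rough sketch of how the two stages can fit together: spend all but one bit per coordinate on a coarse code, then use QJL sign bits to correct the dot product for what the coarse code missed. To stay self-contained, the sketch substitutes a plain fixed-range uniform quantizer for PolarQuant, draws a random unit vector to play the role of a rotated key, and treats the clipping range (`3 / sqrt(d)`) and number of projections as illustrative assumptions. All names are hypothetical, and this is not the published TurboQuant code.

```python
import math
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def random_unit_vector(d, rng):
    """A randomly rotated vector behaves like a random unit vector, so draw one directly."""
    v = [rng.gauss(0, 1) for _ in range(d)]
    n = math.sqrt(dot(v, v))
    return [x / n for x in v]

def uniform_quantize(x, bits, clip):
    """Stage 1 stand-in for PolarQuant: a fixed-range uniform scalar quantizer.

    The grid [-clip, clip] is fixed in advance, so no per-block constants are stored.
    Dequantized floats are returned for readability; real code would keep integer codes.
    """
    step = 2 * clip / (2 ** bits - 1)
    return [round((min(max(v, -clip), clip) + clip) / step) * step - clip for v in x]

def two_stage_encode(key, bits, S):
    """bits - 1 bits per coordinate for the coarse code, then QJL sign bits on the residual."""
    clip = 3 / math.sqrt(len(key))  # assumption: unit-norm key, coordinates roughly N(0, 1/d)
    coarse = uniform_quantize(key, bits - 1, clip)
    residual = [k - c for k, c in zip(key, coarse)]
    signs = [1 if dot(row, residual) >= 0 else -1 for row in S]
    return coarse, signs, math.sqrt(dot(residual, residual))

def two_stage_inner_product(query, coarse, signs, residual_norm, S):
    """<q, key> is approximated as <q, coarse> plus a QJL estimate of <q, residual>."""
    qjl = sum(sgn * dot(row, query) for sgn, row in zip(signs, S))
    qjl *= math.sqrt(math.pi / 2) * residual_norm / len(S)
    return dot(query, coarse) + qjl

rng = random.Random(1)
d, m = 64, 2048
S = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(m)]
key, query = random_unit_vector(d, rng), random_unit_vector(d, rng)

coarse, signs, residual_norm = two_stage_encode(key, 3, S)
exact = dot(query, key)
coarse_only = dot(query, coarse)
two_stage = two_stage_inner_product(query, coarse, signs, residual_norm, S)
print(f"exact={exact:.4f}  coarse only={coarse_only:.4f}  two-stage={two_stage:.4f}")
```

With a budget of one extra bit per coordinate, the number of projections would equal the dimension (`m = d`); a larger `m` is used here only so that a single estimate lands visibly close to the exact value.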

TurboQuant Benchmark Results: Performance Across Long-Context AI Tasks

Google Research evaluated TurboQuant, PolarQuant, and QJL across five standard long-context benchmarks — LongBench, Needle In A Haystack, ZeroSCROLLS, RULER, and L-Eval — using open-source models Gemma and Mistral.

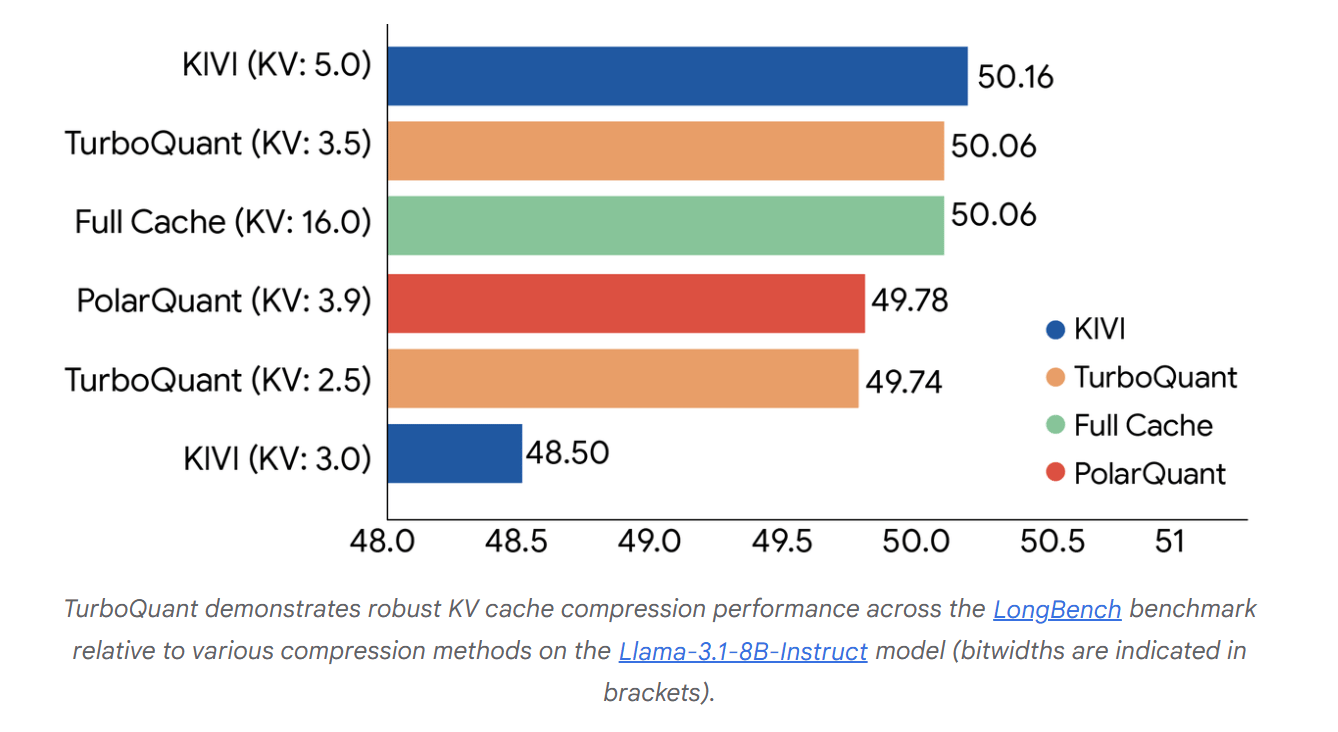

On the LongBench benchmark, which aggregates performance across question answering, code generation, and summarization, TurboQuant achieved optimal scoring while simultaneously reducing KV memory to its lowest footprint. In that same evaluation, TurboQuant outperformed KIVI, the established baseline for KV cache compression, across all tested bit-width configurations.

On Needle In A Haystack tasks — tests that require locating a single specific piece of information buried within a massive body of text — TurboQuant maintained perfect accuracy across all benchmarks while reducing KV cache memory by a factor of at least 6x. PolarQuant performed nearly as well on these tasks independently.

On NVIDIA H100 GPU accelerators, 4-bit TurboQuant delivered up to 8x faster computation of attention logits — the calculations that determine how a model weighs each piece of its input — compared to the uncompressed 32-bit baseline. The runtime overhead of applying TurboQuant is described as negligible.

For vector search — the technology that powers large-scale AI retrieval by finding the most semantically similar items across billions of stored vectors — TurboQuant was evaluated against state-of-the-art methods PQ and RabbiQ using the 1@k recall ratio, a metric measuring how frequently an algorithm correctly identifies the true top result within its top-k approximations. TurboQuant outperformed both baselines despite those methods relying on large, dataset-specific lookup tables that TurboQuant does not require.
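The 1@k recall metric is straightforward to compute once exact and approximate neighbor lists are available. The sketch below uses brute-force inner-product search for the ground truth and, purely as a placeholder compressor, a crude 1-bit sign quantization of the database vectors. That compressor is not PQ, RabbiQ, or TurboQuant, and the helper names are hypothetical.

```python
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def top_k(query, database, k):
    """Indices of the k database vectors with the largest inner product with the query."""
    order = sorted(range(len(database)), key=lambda i: dot(query, database[i]), reverse=True)
    return order[:k]

def recall_1_at_k(true_top1, approx_lists, k):
    """Fraction of queries whose exact nearest neighbor appears in the approximate top-k."""
    hits = sum(1 for t, approx in zip(true_top1, approx_lists) if t in approx[:k])
    return hits / len(true_top1)

rng = random.Random(0)
d, n, num_queries = 16, 500, 50
database = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(n)]
queries = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(num_queries)]

# Placeholder compressor (hypothetical, for illustration): keep only each coordinate's sign.
compressed = [[1.0 if x >= 0 else -1.0 for x in v] for v in database]

truth = [top_k(q, database, 1)[0] for q in queries]
approx = [top_k(q, compressed, 10) for q in queries]
for k in (1, 5, 10):
    print(f"recall 1@{k}: {recall_1_at_k(truth, approx, k):.2f}")
```

Recall 1@k can only rise as k grows, which is why results are usually reported for several values of k.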

What Cheaper AI Memory Means for Enterprise AI and On-Device Deployment

The practical implications of TurboQuant extend well beyond benchmark performance. Reducing KV cache memory by more than 6x changes the economics of running AI at scale in three concrete ways.

First, cost. GPU memory is one of the primary cost drivers in AI inference infrastructure. Less memory consumed per query means more simultaneous requests per hardware unit, lower per-query costs, and the ability to run larger, more capable models on existing hardware without upgrades.
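A back-of-the-envelope calculation shows how a smaller KV cache turns into more simultaneous requests. Every number here is a hypothetical assumption chosen for easy arithmetic (32 layers, 8 key-value heads of dimension 128, 32,768-token contexts, 16-bit values, and 40 GiB of GPU memory reserved for the cache), not a measurement of any particular model or GPU.

```python
def kv_bytes_per_sequence(layers, kv_heads, head_dim, context_tokens, bits_per_value):
    """KV cache size for one sequence: a key and a value vector per head, layer, and token."""
    values = 2 * layers * kv_heads * head_dim * context_tokens
    return values * bits_per_value // 8

def max_concurrent_sequences(kv_budget_bytes, per_sequence_bytes, reduction=1):
    """Full-context sequences that fit in the KV budget if the cache shrinks by `reduction`x."""
    return kv_budget_bytes * reduction // per_sequence_bytes

GiB = 2 ** 30
# Hypothetical configuration, chosen for round numbers.
per_seq = kv_bytes_per_sequence(layers=32, kv_heads=8, head_dim=128,
                                context_tokens=32_768, bits_per_value=16)
budget = 40 * GiB

print(per_seq / GiB)                                 # 4.0 (GiB per sequence)
print(max_concurrent_sequences(budget, per_seq))     # 10 sequences
print(max_concurrent_sequences(budget, per_seq, 6))  # 60 sequences with a 6x smaller cache
```

The relationship is linear: whatever factor the cache shrinks by, the number of full-context sequences that fit in the same memory grows by roughly that factor, as long as the cache, rather than model weights or compute, is the limiting resource.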

Second, deployment reach. Many AI use cases — on-device AI, edge deployments, and resource-constrained enterprise environments — are currently blocked not by model capability but by memory requirements. Compressing that footprint by more than 6x removes a barrier to adoption that hardware improvements alone would take years to address.

Third, semantic search at scale. Google specifically highlights applications to vector search infrastructure, where the ability to build and query large vector indices with minimal memory and near-zero preprocessing time makes semantic search — finding results by meaning and intent rather than keyword matching — faster and more cost-efficient at Google's scale.

Google notes that TurboQuant has direct applications to its Gemini model family. The research team describes TurboQuant, PolarQuant, and QJL as theoretically grounded contributions, not engineering workarounds — they are provably near-optimal, meaning the room for meaningful further improvement on this specific approach is bounded by mathematics, not iteration.

Q&A: How Google TurboQuant Reduces AI Memory and Costs

Q: What does it mean that TurboQuant compresses AI memory by more than 6x?

A: When an AI model processes long inputs, it stores prior computations in a key-value (KV) cache to avoid recalculating them. TurboQuant reduces that stored memory by more than 6x—meaning significantly lower GPU usage and infrastructure costs without changing model performance.

Q: Why does this matter for organizations deploying AI today?

A: GPU memory is one of the primary cost drivers in AI infrastructure. Reducing memory requirements by 6x while also improving speed directly lowers operating costs, increases system capacity, and makes on-device or edge AI deployments more feasible.

Q: How does TurboQuant achieve compression without losing accuracy?

A: TurboQuant combines two algorithms. PolarQuant restructures data to eliminate overhead from normalization constants, while QJL (Quantized Johnson-Lindenstrauss) preserves relationships between data points using a low-bit representation. Together, they enable near-lossless compression at just 3 bits per value.

Q: What are the real-world applications of TurboQuant?

A: TurboQuant applies to LLM inference, vector search, and semantic retrieval systems, enabling faster processing, lower memory usage, and more scalable AI deployments across enterprise and consumer environments.

Q: Are there limitations or unknowns?

A: The method has been evaluated on open-source models such as Gemma and Mistral. While the results are strong, organizations will need to implement it themselves from the published research until production-ready versions are released.

What This Means: Google TurboQuant and the Cost of Running AI

The conversation about AI capability has moved faster than the conversation about AI cost — and for most organizations, cost is now the binding constraint.

The key point: TurboQuant does not make AI smarter. It makes AI dramatically cheaper and faster to run, by solving a memory bottleneck that has been a consistent drag on both performance and economics. A 6x reduction in memory consumption, combined with an 8x speedup in a core processing step, compounds into a meaningful change in what AI infrastructure can realistically support.

Who should care: AI infrastructure teams, enterprise technology leaders, and product teams evaluating on-device or edge AI deployments should treat this as directly relevant. The memory constraints that currently limit deployment options — how many users a system can serve, what hardware is required, whether on-device AI is viable — are exactly what TurboQuant targets.

Why this matters now: The AI industry is in a sustained period of infrastructure pressure. Model capability has outpaced the economics of running those models at scale. Techniques that reduce memory requirements without sacrificing accuracy or requiring retraining are rare and immediately deployable — they do not require waiting for the next hardware generation or model update cycle.

What decision this affects: Organizations evaluating AI infrastructure investments, hardware procurement, and deployment architecture should factor TurboQuant into those decisions. It raises the question of whether current hardware limitations are a real ceiling — or an engineering problem that has just become significantly more solvable.

In short, TurboQuant reduces one of the most expensive constraints in AI infrastructure — memory — making advanced AI systems cheaper, faster, and more practical to deploy at scale.

The gap between what AI can do and what organizations can afford to run it at scale just got a little smaller.

Sources:

Google Research - TurboQuant: Redefining AI Efficiency with Extreme Compression

TurboQuant Technical Paper (arXiv)
https://arxiv.org/abs/2504.19874

PolarQuant Technical Paper (arXiv)
https://arxiv.org/abs/2502.02617

Editor’s Note: This article was created by Alicia Shapiro, CMO of AiNews.com, with writing, image, and idea-generation support from Claude, an AI assistant. However, the final perspective and editorial choices are solely Alicia Shapiro’s. Special thanks to Claude for assistance with research and editorial support in crafting this article.