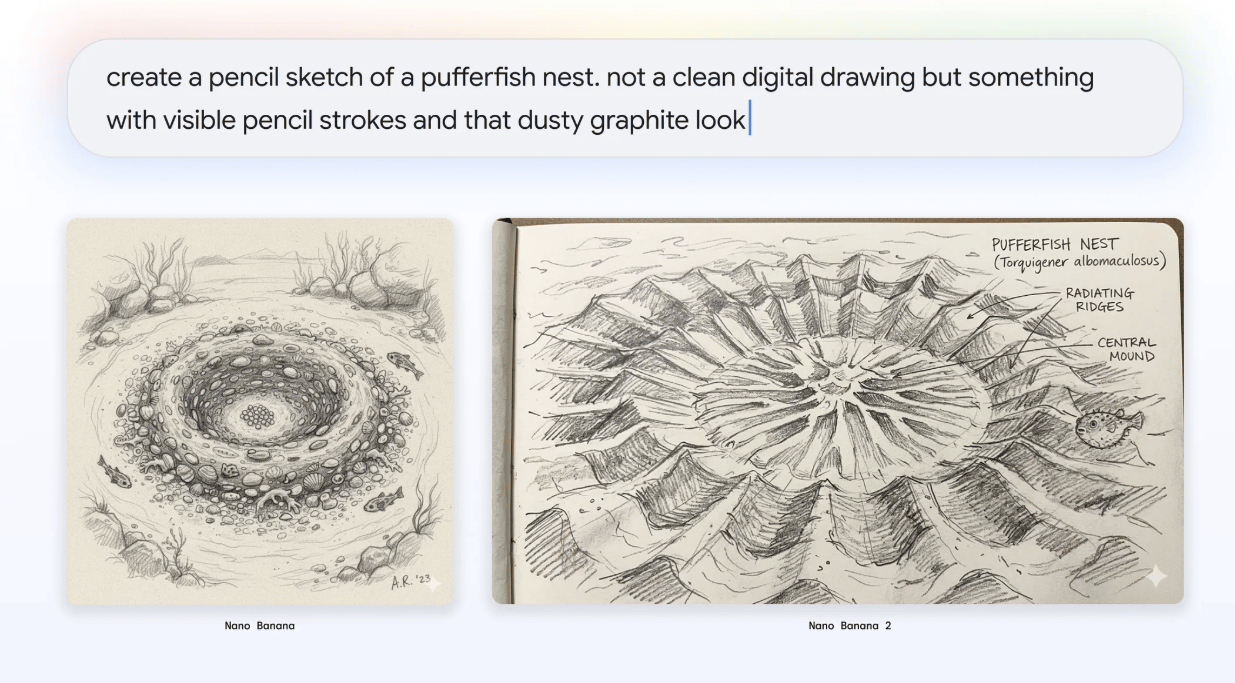

A marketer uses Nano Banana 2 to generate and refine visual content in real time, illustrating how AI image creation is becoming embedded in everyday production workflows. Image Source: ChatGPT-5.2

Google Introduces Nano Banana 2 to Accelerate AI Image Creation Across Marketing, Search, and Cloud Workflows

Google has launched Nano Banana 2, its latest AI image generation model, combining the advanced reasoning and visual quality of its Pro systems with the high-speed performance of the Gemini Flash architecture.

The update matters because Google is moving AI image generation from a creative experiment into a real-time production tool embedded across Search, advertising, and enterprise cloud workflows.

Nano Banana 2 — formally known as Gemini 3.1 Flash Image — unifies the advanced world knowledge and reasoning introduced in Nano Banana Pro with Gemini Flash’s high-speed generation architecture. The model integrates real-time knowledge grounding, improved text rendering, and consistent visual outputs while dramatically reducing generation time, enabling rapid iteration on marketing assets, diagrams, infographics, and production-ready visual content.

The rollout affects creators, marketers, developers, and enterprise teams using Google’s ecosystem, as Nano Banana 2 becomes integrated across Gemini, Google Ads, Search, and Vertex AI services.

Here’s what this means for organizations increasingly relying on AI to produce visual content at scale.

Key Takeaways: Google Nano Banana 2 AI Image Model Capabilities and Industry Impact

Google launches Nano Banana 2, merging Pro-level image intelligence with Gemini Flash speed

AI image generation becomes workflow-integrated, expanding across Search, Ads, and enterprise cloud tools

Real-world knowledge grounding enables infographics, diagrams, and data visualizations from prompts

Improved text rendering and localization supports global marketing and multilingual content creation

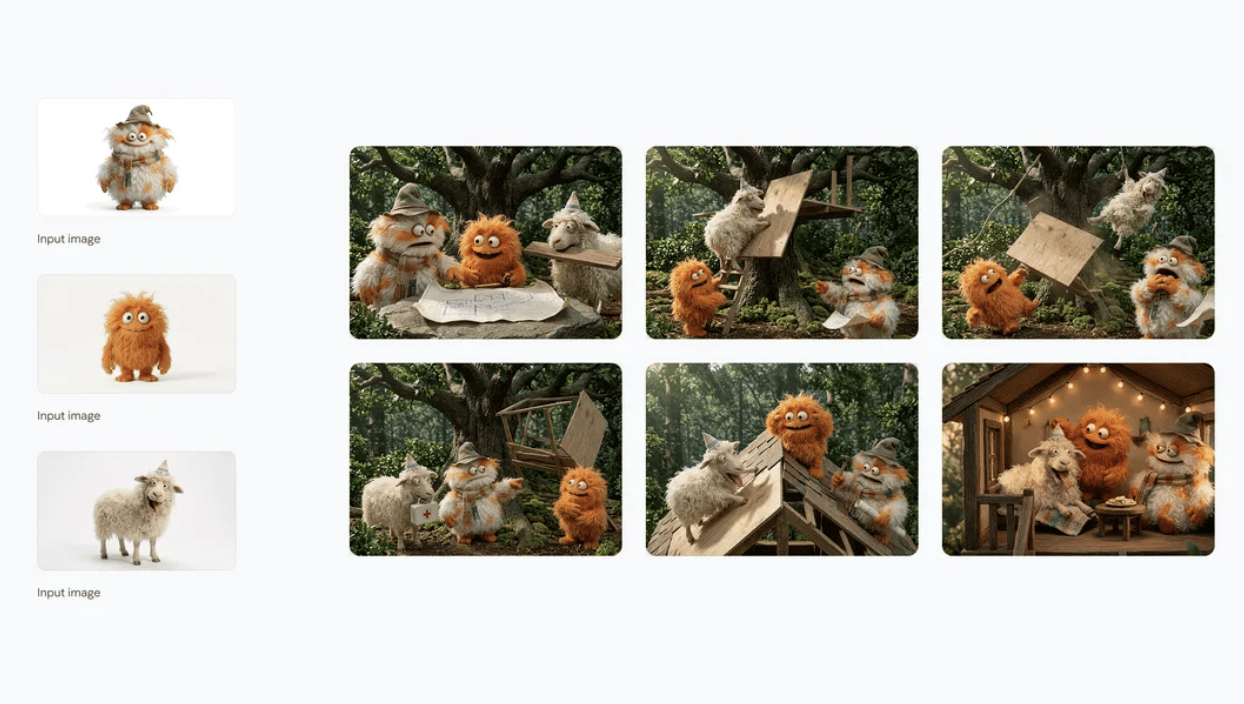

Character and object consistency enables storyboard and campaign continuity workflows

Expanded AI provenance tools strengthen verification using SynthID and C2PA standards

Nano Banana 2 Combines Gemini Flash Speed With Real-Time Knowledge Grounding

Nano Banana 2 represents the next step in Google’s image model lineup.

In August 2025, Google introduced Nano Banana, its Gemini-based image model that gained significant attention for redefining image generation and editing capabilities. In November 2025, the company followed with Nano Banana Pro, adding more advanced intelligence, improved reasoning, and studio-quality creative control aimed at high-fidelity tasks.

Nano Banana 2 brings those Pro-level capabilities into the Gemini Flash architecture, making advanced reasoning and visual control available at high-speed generation performance — narrowing the gap between experimentation and production.

According to Google, the model draws from Gemini’s real-world knowledge base while incorporating real-time web search grounding — meaning it can retrieve and reference up-to-date information at the moment an image is generated — allowing it to render more accurate subjects and produce structured visuals such as:

Infographics

Diagrams generated from notes

Data visualizations

Concept illustrations informed by current information

This approach moves image generation beyond purely stylistic rendering toward reasoning-guided creation, where visual outputs are informed by structured understanding and factual context.

Enhanced Text Rendering and Multilingual Localization for Marketing and Global Content

One of the persistent challenges in AI image generation has been accurate text placement and readability. Google says Nano Banana 2 significantly improves this capability.

The model can now:

Generate clear, legible text within images

Produce marketing mockups and greeting cards with usable typography

Translate and localize text directly inside visuals

This functionality targets global creative workflows where assets must be adapted quickly across languages and markets.

Improved Subject Consistency and Visual Quality for Production-Ready AI Images

Google also focused on narrowing the gap between generation speed and visual quality.

Nano Banana 2 introduces several upgrades:

Subject Consistency: Users can maintain character likeness for up to five characters and preserve visual consistency across up to 14 objects in a single workflow — enabling storyboards and multi-scene narratives without visual drift.

Improved Instruction Following: The model adheres more closely to complex prompts, aiming to capture nuanced creative requests more reliably.

Production-Ready Output: Images can be generated across multiple aspect ratios and resolutions ranging from 512 pixels to 4K, supporting use cases from social media assets to large-format displays.

Higher Visual Quality: Google reports improvements in lighting realism, texture detail, and image sharpness while maintaining Flash-level generation speed.
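The limits quoted above can be turned into a small pre-flight check before a batch of prompts is sent. The following is only a sketch under stated assumptions: it treats the "512 pixels to 4K" range as the long edge of the image and reads "4K" as 4096 pixels, neither of which the article specifies, and the names `ImagePlan`, `dimensions`, and `check_plan` are hypothetical helpers, not part of any Google SDK.

```python
from dataclasses import dataclass

# Limits as reported in the article; assumptions, not an API contract.
MAX_CHARACTERS = 5
MAX_OBJECTS = 14
MIN_LONG_EDGE = 512
MAX_LONG_EDGE = 4096  # assumption: "4K" read as 4096 px on the long edge


@dataclass(frozen=True)
class ImagePlan:
    """Hypothetical description of one planned generation workflow."""
    characters: int
    objects: int
    long_edge: int
    aspect_w: int
    aspect_h: int


def dimensions(long_edge: int, aspect_w: int, aspect_h: int) -> tuple[int, int]:
    """Return (width, height) with the longer side equal to long_edge."""
    if aspect_w >= aspect_h:
        return long_edge, round(long_edge * aspect_h / aspect_w)
    return round(long_edge * aspect_w / aspect_h), long_edge


def check_plan(plan: ImagePlan) -> list[str]:
    """Return a list of problems; an empty list means the plan fits the limits."""
    problems = []
    if plan.characters > MAX_CHARACTERS:
        problems.append(f"{plan.characters} characters exceeds limit of {MAX_CHARACTERS}")
    if plan.objects > MAX_OBJECTS:
        problems.append(f"{plan.objects} objects exceeds limit of {MAX_OBJECTS}")
    if not MIN_LONG_EDGE <= plan.long_edge <= MAX_LONG_EDGE:
        problems.append(
            f"long edge {plan.long_edge}px is outside {MIN_LONG_EDGE}-{MAX_LONG_EDGE}px"
        )
    return problems
```

For example, `dimensions(1024, 16, 9)` gives a 1024 by 576 landscape frame, and a storyboard plan with six characters would be flagged by `check_plan` before any generation request is made.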

Nano Banana 2 Rollout Across Gemini, Google Search, Ads, and Vertex AI

Google is rolling Nano Banana 2 out today across its product ecosystem:

Gemini app — replaces Nano Banana Pro across the Fast, Thinking, and Pro models. Google AI Pro and Ultra subscribers will retain access to Nano Banana Pro for specialized high-fidelity tasks via image regeneration options.

Search — integrated into AI Mode and Lens through the Google app as well as mobile and desktop browsers. The rollout expands availability across 141 countries and territories and adds support for eight new languages.

AI Studio & Gemini API — available in preview, with pricing accessible through Google’s developer documentation. Also available within Google Antigravity.

Google Cloud / Vertex AI — available in preview through the Gemini API inside Vertex AI.

Flow — becomes the default image generation model for all Flow users and is available without credit usage.

Google Ads — available now, powering image suggestions during campaign creation.
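For developers, the Gemini API entry in the list above is the most direct route to the model. The sketch below builds, but does not send, a `generateContent` request using only the Python standard library. The model ID `gemini-3.1-flash-image-preview` is an assumption inferred from the anchor of the pricing page listed in Sources, and `build_image_request` is a hypothetical helper name; check Google's developer documentation for the current model ID and any image-specific request options.

```python
import json
import urllib.request

API_BASE = "https://generativelanguage.googleapis.com/v1beta"
# Assumption: model ID inferred from the pricing-page anchor, not confirmed here.
MODEL_ID = "gemini-3.1-flash-image-preview"


def build_image_request(
    prompt: str, api_key: str, model: str = MODEL_ID
) -> urllib.request.Request:
    """Build a POST request for the Gemini API generateContent endpoint.

    The request is only constructed here; nothing is sent over the network.
    """
    body = {"contents": [{"parts": [{"text": prompt}]}]}
    return urllib.request.Request(
        url=f"{API_BASE}/models/{model}:generateContent",
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "x-goog-api-key": api_key,
        },
        method="POST",
    )
```

Passing the returned object to `urllib.request.urlopen` with a valid API key would submit the prompt. Keeping request construction separate from sending makes it easy to inspect or log the payload while the model remains in preview.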

Google Expands AI Image Provenance With SynthID and C2PA Verification

Alongside capability improvements, Google emphasized continued investment in AI content transparency as generative media becomes more widely used and harder to distinguish from human-created content.

Nano Banana 2 outputs are supported by:

SynthID, Google’s watermarking and verification technology

C2PA Content Credentials, an industry standard designed to work across platforms to verify how AI-generated media was created, not just whether AI was used

Google says SynthID verification within the Gemini app has already been used more than 20 million times across various languages since launching last November, helping users identify AI-generated images, video, and audio.

The company plans to add C2PA verification directly into the Gemini app in a future update, providing additional context about how AI-generated media was created.

Q&A: What Nano Banana 2 Means for AI Image Generation Workflows

Q: What is Nano Banana 2?

A: It is Google’s latest AI image generation model, combining the reasoning and quality of Nano Banana Pro with the speed of Gemini Flash.

Q: What makes it different from earlier versions?

A: Faster generation, stronger real-world knowledge grounding, improved text rendering, and better consistency across characters and objects.

Q: Who is this designed for?

A: Creators, marketers, developers, and enterprises needing rapid iteration with production-ready visuals.

Q: Is Nano Banana Pro still available?

A: Yes. Google AI Pro and Ultra subscribers can still access it for specialized high-fidelity tasks.

Q: Why is Google emphasizing speed alongside image quality?

A: Faster generation enables AI images to be used inside real production workflows — including marketing campaigns, search experiences, and enterprise applications — rather than as standalone creative tools.

What This Means: AI Image Creation Moves Toward Real-Time Production Workflows

As AI image models become embedded into everyday software platforms, updates like Nano Banana 2 illustrate how visual content creation is shifting toward real-time, workflow-integrated production.

Who should care: If you are a creator, marketer, or enterprise team producing visual content, this update matters because image generation is increasingly becoming a real-time production tool rather than a standalone creative experiment.

Why it matters now: Major platforms are integrating AI creation directly into search, advertising, and cloud infrastructure, which can cut the time between idea, iteration, and deployment from hours or days to minutes. By combining faster generation with grounded real-world knowledge, Nano Banana 2 narrows the gap between ideation and usable output, allowing teams to move from concept to production without separate research, drafting, and design cycles.

What decision this affects: Organizations must decide how to structure creative workflows, whether to keep relying on traditional multi-step design pipelines or to begin integrating AI-driven visual production into everyday operations.

As AI image models combine speed with grounded reasoning, the competitive advantage may come less from generating images than from how quickly ideas can move from prompt to publish.

Sources:

Google Blog - Introducing Nano Banana 2

https://blog.google/innovation-and-ai/technology/ai/nano-banana-2/

Google AI for Developers - Gemini API Pricing: Gemini 3.1 Flash Image Preview

https://ai.google.dev/gemini-api/docs/pricing#gemini-3.1-flash-image-preview

Google Support - Gemini App Help: Image Generation and Editing

https://support.google.com/gemini/answer/14286560

Google Search Help - AI Mode and Lens Information

https://support.google.com/websearch/answer/16649374

Google DeepMind - SynthID

https://deepmind.google/models/synthid/

C2PA (Coalition for Content Provenance and Authenticity) - C2PA Content Credentials

https://c2pa.org/

Google Blog - AI Image Verification in the Gemini App

https://blog.google/innovation-and-ai/products/ai-image-verification-gemini-app/

Google Blog - Updated Image Editing Model in Gemini

https://blog.google/products-and-platforms/products/gemini/updated-image-editing-model/

Google Blog - Nano Banana and Google Trends 2025

https://blog.google/products-and-platforms/products/gemini/nano-banana-google-trends-2025/

Google Blog - Introducing Nano Banana Pro

https://blog.google/innovation-and-ai/products/nano-banana-pro/

Editor’s Note: This article was created by Alicia Shapiro, CMO of AiNews.com, with writing, image, and idea-generation support from ChatGPT, an AI assistant. However, the final perspective and editorial choices are solely Alicia Shapiro’s. Special thanks to ChatGPT for assistance with research and editorial support in crafting this article.