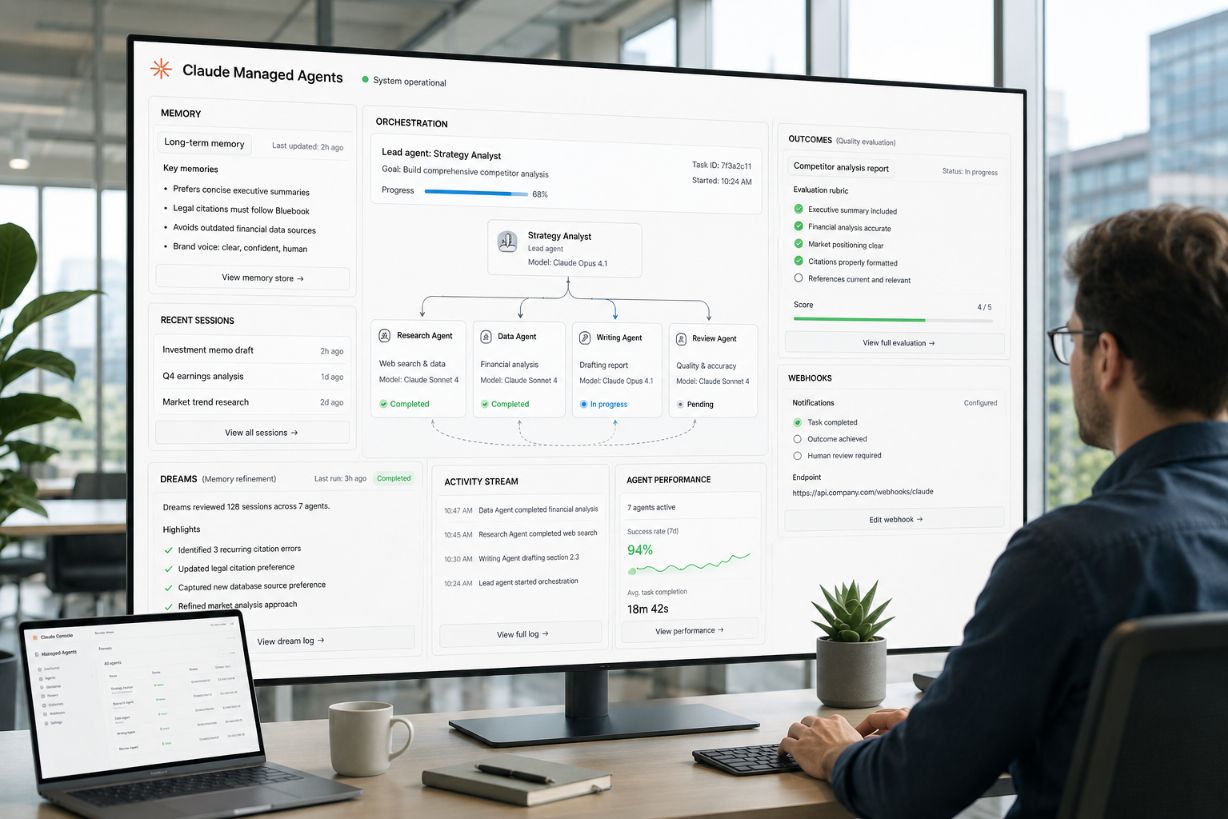

A conceptual visualization of Claude Managed Agents coordinating memory, outcomes and multiagent workflows for long-running AI tasks. AI-generated image via ChatGPT (OpenAI)

Claude Managed Agents Add Dreaming to Improve AI Agent Reliability

Anthropic has launched dreaming in Claude Managed Agents as a research preview, giving developers a new way to improve how AI agents remember past work, evaluate recurring patterns and become more reliable over time. The update affects teams building long-running AI agents that need to complete complex tasks with less step-by-step human steering.

The company also made outcomes, multiagent orchestration and webhooks available to developers building with Managed Agents. For teams deciding whether AI agents are ready for more complex workflows, the update focuses on three practical needs: better memory, clearer quality standards and more coordinated task execution.

In short, Claude Managed Agents are gaining infrastructure for AI agents that need to work beyond a single prompt. Dreaming improves memory between sessions, outcomes gives agents a success rubric, and multiagent orchestration lets a lead agent divide complex work among specialist agents.

Claude Managed Agents are Anthropic’s developer tools for building managed AI agent systems that use memory, evaluation criteria, workflow coordination and specialized agents to complete complex tasks over time.

Key Takeaways: Claude Managed Agents Dreaming and Reliability Features

Claude Managed Agents now combine memory refinement, rubric-based evaluation and multiagent coordination to help developers build AI agents that can improve, check and organize complex work.

Claude Managed Agents now include dreaming as a research preview, giving developers a scheduled process for improving how agents use memory across past sessions

Dreaming reviews agent sessions and memory stores to identify recurring mistakes, repeated workflows and team-level preferences that can improve future agent behavior

Outcomes lets developers define a success rubric so an agent can evaluate work against specific requirements and revise outputs before human review

Multiagent orchestration allows a lead agent to divide complex work among specialist agents with their own model, prompt and tools

Webhooks let developers receive notifications when an agent completes work tied to a defined outcome, making longer-running agent tasks easier to monitor

Harvey, Netflix, Spiral by Every and Wisedocs are using Claude Managed Agents for legal drafting, build-log analysis, writing workflows and document quality checks

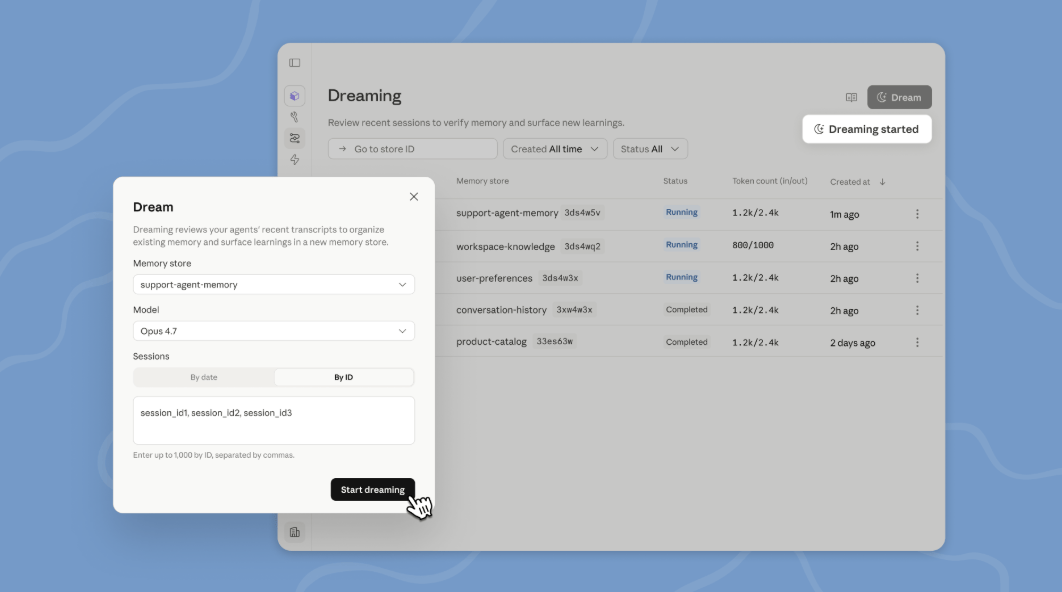

Anthropic Adds Dreaming to Claude Managed Agents Memory

Anthropic introduced dreaming in Claude Managed Agents as a research preview for developers building agents on the Claude Platform. Dreaming extends memory by reviewing previous agent sessions and memory stores, finding patterns and curating what agents should remember so they can self-improve over time.

The process is scheduled, meaning it runs between work sessions rather than only during a single task. Anthropic says developers can choose how much control they want over dreaming. The feature can update an agent’s memory automatically, or developers can review proposed memory changes before they are added. That gives teams a way to balance automation with oversight, especially when agent memory may influence future work quality or team-specific preferences.
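The two control modes described above can be pictured with a small sketch. This is a toy model only, not Anthropic's API: `MemoryStore`, `MemoryChange`, `submit` and `approve_all` are hypothetical names invented for illustration, and the sketch assumes a memory store is a simple key-value mapping.

```python
from dataclasses import dataclass, field


@dataclass
class MemoryChange:
    """A proposed edit to agent memory (hypothetical shape)."""
    key: str
    value: str


@dataclass
class MemoryStore:
    """Toy memory store with an optional human review queue."""
    entries: dict[str, str] = field(default_factory=dict)
    pending: list[MemoryChange] = field(default_factory=list)

    def submit(self, change: MemoryChange, auto_apply: bool) -> None:
        """Apply the change immediately, or queue it for review."""
        if auto_apply:
            self.entries[change.key] = change.value
        else:
            self.pending.append(change)

    def approve_all(self) -> int:
        """Apply every queued change and return how many were applied."""
        applied = len(self.pending)
        for change in self.pending:
            self.entries[change.key] = change.value
        self.pending.clear()
        return applied


store = MemoryStore()
# Automatic mode: the change lands in memory right away.
store.submit(MemoryChange("export_format", "prefer docx"), auto_apply=True)
# Review mode: the change waits until a person approves it.
store.submit(MemoryChange("tone", "formal"), auto_apply=False)
```

In this sketch, `store.entries` holds only the automatically applied change until `store.approve_all()` is called, which is the oversight trade-off the two modes represent.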

Dreaming surfaces patterns that a single agent may not see on its own. According to Anthropic, those patterns can include recurring mistakes, workflows that agents repeatedly converge on and preferences that appear across a team. That makes dreaming especially relevant for teams that use agents over long periods of work, where a single session may not show enough context to improve future performance.

Memory and dreaming work as two parts of a longer-term improvement system. Memory allows an agent to capture what it learns while it works. Dreaming then refines that memory between sessions by pulling shared learnings across agents and keeping memory more focused and up-to-date as it grows.

Dreaming is available in Managed Agents on the Claude Platform, and developers can request access through Anthropic’s announcement page.

Claude Outcomes Add Rubric-Based Grading for Agent Work

Outcomes gives developers a way to define what successful work should look like before an agent starts or completes a task. Instead of relying only on a prompt, developers write a rubric that describes the requirements the agent must satisfy.

Anthropic says a separate grader evaluates the agent’s output against that rubric in its own context window. Because the grader runs separately, Anthropic says it is not influenced by the agent’s reasoning. When the grader finds that something is not correct or complete, it identifies what needs to change, and the agent takes another pass.
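The grade-and-revise loop can be sketched in a few lines. This is a simplified illustration under stated assumptions, not Anthropic's implementation: the rubric is modeled as named pass/fail checks, the grader is a plain function that sees only the output (never the agent's reasoning), and `toy_agent`, `grade` and `run_with_outcome` are hypothetical names.

```python
from typing import Callable

# Assumption: a rubric is a set of named checks over the output text.
Rubric = dict[str, Callable[[str], bool]]


def grade(output: str, rubric: Rubric) -> list[str]:
    """Return the names of the rubric items the output fails."""
    return [name for name, check in rubric.items() if not check(output)]


def run_with_outcome(agent: Callable[[list[str]], str],
                     rubric: Rubric,
                     max_passes: int = 3) -> tuple[str, int]:
    """Let the agent revise until the grader passes it or passes run out."""
    feedback: list[str] = []
    output = ""
    for attempt in range(1, max_passes + 1):
        output = agent(feedback)          # agent sees only grader feedback
        feedback = grade(output, rubric)  # grader sees only the output
        if not feedback:
            return output, attempt
    return output, max_passes


def toy_agent(feedback: list[str]) -> str:
    """Stand-in for an agent: fixes whatever the grader flagged."""
    draft = "Summary: build failed."
    if "has_next_steps" in feedback:
        draft += " Next steps: rerun with cache disabled."
    return draft


rubric: Rubric = {
    "has_summary": lambda text: "Summary:" in text,
    "has_next_steps": lambda text: "Next steps:" in text,
}
final, passes = run_with_outcome(toy_agent, rubric)
```

Here the first draft fails one rubric item, the grader reports it, and the second pass satisfies the rubric. The cap on passes is a design choice of this sketch so the loop always terminates.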

The key point: Outcomes turns quality control into part of the agent workflow by giving the agent a measurable standard for success and a separate review process that can trigger revision before a human reviews the result.

Anthropic says agents do their best work when they know what “good” looks like, such as a structural framework, a presentation standard or a set of requirements that need to be met. With outcomes, agents can check their work against that standard and self-correct until the output meets it, without requiring a human to review each attempt. Anthropic describes outcomes as especially useful for tasks that require attention to detail or exhaustive coverage. It also works for subjective quality, such as whether copy matches a brand voice or a design follows visual guidelines.

In Anthropic’s internal testing, outcomes improved task success by up to 10 points over a standard prompting loop, with the largest gains on the hardest problems. The company also reported file-generation improvements of +8.4% task success on docx and +10.1% on pptx in internal benchmarks.

The update also connects outcomes with webhooks. Developers can define an outcome, allow the agent to run and receive a webhook notification when the work is complete.
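On the receiving side, a webhook is an HTTP POST that the developer's own server handles. The sketch below uses only Python's standard library. The payload fields `session_id` and `status` are assumptions made for illustration, not the documented event schema, so the real field names should be taken from Anthropic's webhook documentation.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def parse_event(body: bytes) -> dict:
    """Decode a webhook body. Field names are hypothetical."""
    event = json.loads(body)
    return {
        "session": event.get("session_id", "unknown"),
        "done": event.get("status") == "completed",
    }


class WebhookHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        # Read exactly the number of bytes the sender declared.
        length = int(self.headers.get("Content-Length", 0))
        summary = parse_event(self.rfile.read(length))
        if summary["done"]:
            print(f"Session {summary['session']} finished")
        # Acknowledge receipt with an empty success response.
        self.send_response(204)
        self.end_headers()


def serve(port: int = 8080) -> None:
    """Run the receiver locally until interrupted."""
    HTTPServer(("127.0.0.1", port), WebhookHandler).serve_forever()
```

Calling `serve()` starts a local listener. A production receiver would also authenticate incoming requests in whatever way the provider documents.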

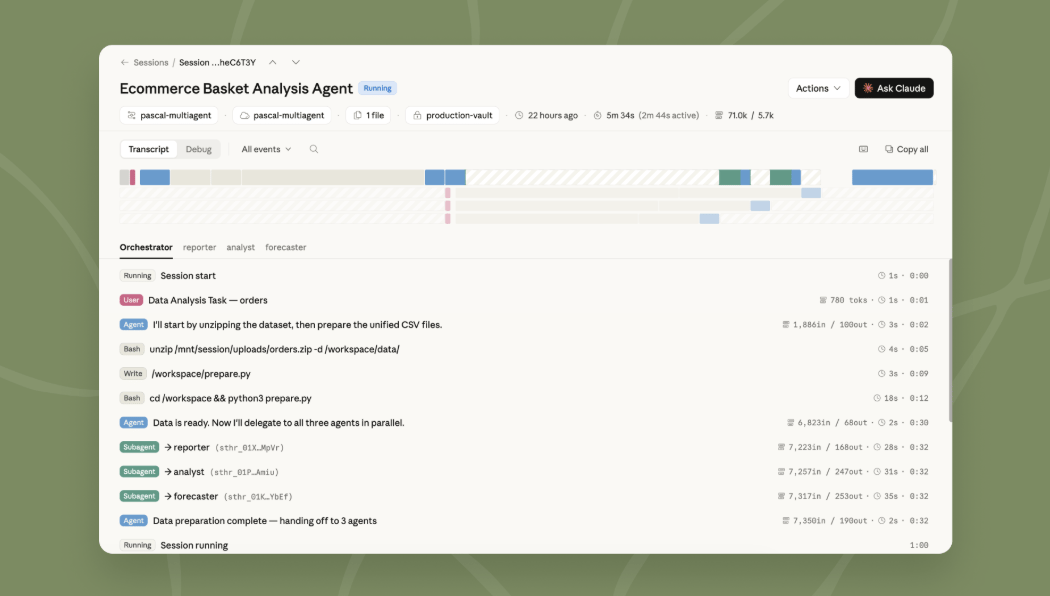

Claude Multiagent Orchestration Lets Lead Agents Delegate Complex Work

Multiagent orchestration gives Claude Managed Agents a way to handle work that may be too large or varied for a single agent to complete well. In this model, a lead agent breaks the job into smaller pieces and delegates each piece to a specialist agent with its own model, prompt and tools. For example, a lead agent can run an investigation while subagents review deploy history, error logs, metrics and support tickets.

The specialist agents work in parallel on a shared filesystem and contribute to the lead agent’s overall context. Anthropic says the lead agent can check back in with other agents during a workflow because events are persistent and every agent remembers what it has done.
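The delegation pattern resembles a fan-out and gather. The following is a rough local analogy using Python threads, not the Managed Agents API: each "specialist" is an ordinary function standing in for a subagent, and the names `SPECIALISTS` and `lead_agent` are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


# Stand-ins for specialist agents. In the real system each would have
# its own model, prompt and tools.
def review_deploy_history(incident: str) -> str:
    return f"deploy history checked for {incident}"


def review_error_logs(incident: str) -> str:
    return f"error logs checked for {incident}"


def review_metrics(incident: str) -> str:
    return f"metrics checked for {incident}"


def review_support_tickets(incident: str) -> str:
    return f"support tickets checked for {incident}"


SPECIALISTS = {
    "deploy_history": review_deploy_history,
    "error_logs": review_error_logs,
    "metrics": review_metrics,
    "support_tickets": review_support_tickets,
}


def lead_agent(incident: str) -> dict[str, str]:
    """Fan the investigation out in parallel, then gather every report."""
    with ThreadPoolExecutor(max_workers=len(SPECIALISTS)) as pool:
        futures = {
            name: pool.submit(specialist, incident)
            for name, specialist in SPECIALISTS.items()
        }
        # Collect results into one coordinated context for the lead.
        return {name: future.result() for name, future in futures.items()}


reports = lead_agent("checkout-outage")
```

The returned dictionary plays the role of the lead agent's combined context, with one entry per specialist.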

Developers can also trace the process in the Claude Console, including which agent performed each step, the order of actions and the reasoning behind delegation and execution. That visibility is important for teams that need to understand not only the final result, but also how an agent system reached that result.

This capability is especially relevant for workflows involving large amounts of information, multiple tools or repeated checks across different systems. Instead of having one agent process everything sequentially, a lead agent can assign the work to multiple specialists and bring the results back into one coordinated context.

Claude Managed Agents Show Use Cases in Legal, Media and Document Workflows

Anthropic highlighted several teams using dreaming, outcomes and multiagent orchestration in real-world workflows.

Harvey uses Managed Agents to coordinate complex legal work, including long-form drafting and document creation. Anthropic says Harvey’s agents use dreaming to remember what they learned between sessions, including filetype workarounds and tool-specific patterns. In Harvey’s tests, completion rates increased by about 6x.

Netflix’s platform team built an analysis agent that processes logs from hundreds of builds across different sources. For changes that affect thousands of applications, the key operational need is finding recurring issues across many builds rather than surfacing every individual error. Anthropic says multiagent orchestration allows the agent to analyze batches in parallel and surface the patterns worth acting on.
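The idea of surfacing recurring issues rather than every individual error can be illustrated with a small aggregation sketch. This is not Netflix's implementation: it assumes each build's log has already been reduced to a list of error signatures, and `count_batch` and `recurring_issues` are hypothetical helpers.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def count_batch(batch: list[list[str]]) -> Counter:
    """Count, per error signature, how many builds in the batch contain it."""
    counts: Counter = Counter()
    for build_errors in batch:
        # A set ensures a signature counts once per build.
        counts.update(set(build_errors))
    return counts


def recurring_issues(batches: list[list[list[str]]],
                     min_builds: int) -> dict[str, int]:
    """Analyze batches in parallel and keep signatures seen in enough builds."""
    total: Counter = Counter()
    with ThreadPoolExecutor() as pool:
        for partial in pool.map(count_batch, batches):
            total.update(partial)
    return {sig: n for sig, n in total.items() if n >= min_builds}


# Two batches of two builds each, with made-up error signatures.
batches = [
    [["timeout", "oom"], ["timeout"]],
    [["timeout", "lint"], ["oom", "oom"]],
]
issues = recurring_issues(batches, min_builds=2)
```

With this sample data, `timeout` appears in three builds and `oom` in two, so both are surfaced, while the one-off `lint` error is filtered out.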

Spiral by Every is using multiagent orchestration and outcomes to power the writing agent behind its new API and CLI. The lead agent runs on Haiku, handles incoming requests, asks brief follow-up questions when needed and delegates drafting to subagents running on Opus. When a user asks for multiple drafts, the subagents run in parallel.

Spiral also uses outcomes to enforce writing quality. Each draft is scored against a rubric based on Every’s editorial principles and the user’s voice, both pulled from memory. Anthropic says only drafts that meet the standard are returned.

Wisedocs built a document quality check agent using Managed Agents and outcomes. The company uses outcomes to grade each review against internal guidelines. Anthropic says Wisedocs’ reviews now run 50% faster while staying aligned with team standards.

Claude Managed Agents Availability Includes Research Preview and Public Beta

Anthropic says dreaming is available in research preview as part of Claude Managed Agents. Developers can request access to dreaming through the Claude announcement page.

Outcomes, multiagent orchestration and memory are available in public beta as part of Managed Agents. Developers can learn more through Anthropic’s documentation or use the Claude Console to deploy an agent.

Anthropic did not include new pricing details in the announcement. The company directed developers to the Claude Platform, documentation and Console for access and deployment information.

Q&A: Claude Managed Agents Dreaming, Outcomes and Orchestration

Q: What did Anthropic add to Claude Managed Agents?

A: Anthropic added dreaming to Claude Managed Agents as a research preview and made outcomes, multiagent orchestration and webhooks available to developers. The update gives developers more tools for building AI agents that can improve memory, evaluate work quality and coordinate complex tasks.

Q: What is dreaming in Claude Managed Agents?

A: Dreaming is a scheduled process that reviews agent sessions and memory stores to identify recurring patterns, mistakes and team preferences. Developers can let dreaming update memory automatically or review proposed memory changes before they are added.

Q: How do Claude outcomes help agents check their work?

A: Outcomes lets developers define a success rubric that describes what good work should look like. A separate grader checks the agent’s output in its own context window, identifies what needs to change and sends the agent back for another pass when the output does not meet the standard.

Q: How does Claude multiagent orchestration work?

A: Multiagent orchestration allows a lead agent to divide complex work among specialist agents with their own models, prompts and tools. This helps teams run parallel investigations, coordinate work across different systems and trace each agent’s role in the Claude Console.

Q: Is Claude dreaming available now?

A: Dreaming is available as a research preview, and developers can request access through Anthropic. Outcomes, multiagent orchestration and memory are available in public beta as part of Managed Agents.

Q: Who is using Claude Managed Agents?

A: Anthropic named Harvey, Netflix, Spiral by Every and Wisedocs as teams using Claude Managed Agents. Their examples include legal drafting, build-log analysis, writing workflows and document quality checks.

What This Means: Claude Managed Agents Improve AI Agent Reliability

Anthropic’s Managed Agents update focuses on a core requirement for AI agent adoption: whether teams can manage, evaluate and improve agents well enough to trust them with long-running work.

Key point: Claude Managed Agents are becoming managed workflow systems, not just prompt-based assistants. Dreaming improves what agents remember, outcomes defines how agents judge success and multiagent orchestration controls how agents divide complex work.

Who should care: Developers, AI product teams, enterprise technology leaders and operations teams should pay attention because these features affect how agent systems are built, tested and monitored. Teams evaluating AI agents need to know whether an agent can improve from prior work, meet a defined standard and show how work was completed.

Why this matters now: AI agents are moving into workflows that require persistence, review and coordination across tools. As agents take on longer-running work, trust depends on whether teams can see what the agent remembered, how the output was checked and how complex work was divided across systems.

What decision this affects: This affects whether organizations build agents as simple assistants or as managed systems with memory, grading, orchestration and traceability. It also affects how teams evaluate claims about AI agent reliability, workflow automation and enterprise readiness.

In short, Anthropic is building more of the management layer around AI agents. The update does not just make agents more capable; it gives teams clearer ways to define good work, inspect agent behavior and build trust in how agent work gets completed over time.

AI agent adoption will depend less on whether agents can act and more on whether organizations can trust the systems managing those actions.

Sources:

Claude - New in Claude Managed Agents: dreaming, outcomes, and multiagent orchestration

https://claude.com/blog/new-in-claude-managed-agents

Claude - Claude Managed Agents: Memory

https://claude.com/blog/claude-managed-agents-memory

Anthropic Docs - Dreams

https://platform.claude.com/docs/en/managed-agents/dreams

Claude - Claude Managed Agents Access Request

https://claude.com/form/claude-managed-agents

Anthropic Docs - Define Outcomes

https://platform.claude.com/docs/en/managed-agents/define-outcomes

Anthropic Docs - Webhooks

https://platform.claude.com/docs/en/managed-agents/webhooks

Anthropic Docs - Multi-agent

https://platform.claude.com/docs/en/managed-agents/multi-agent

Claude Platform - Login

https://platform.claude.com/login

Editor’s Note: This article was created by Alicia Shapiro, CMO of AiNews.com, with writing support, AEO/GEO/SEO optimization, image concept development, and editorial structuring support from ChatGPT, an AI assistant. All final editorial decisions, perspectives, and publishing choices were made by Alicia Shapiro.